Introduction

Neo4j 3.x introduced the concept of user-defined procedures and functions. Those are custom implementations of certain functionality, that can’t be (easily) expressed in Cypher itself. They are implemented in Java and can be easily deployed into your Neo4j instance, and then be called from Cypher directly.

The APOC library consists of many (about 450) procedures and functions to help with many different tasks in areas like data integration, graph algorithms or data conversion.

License

Apache License 2.0

"APOC" Name history

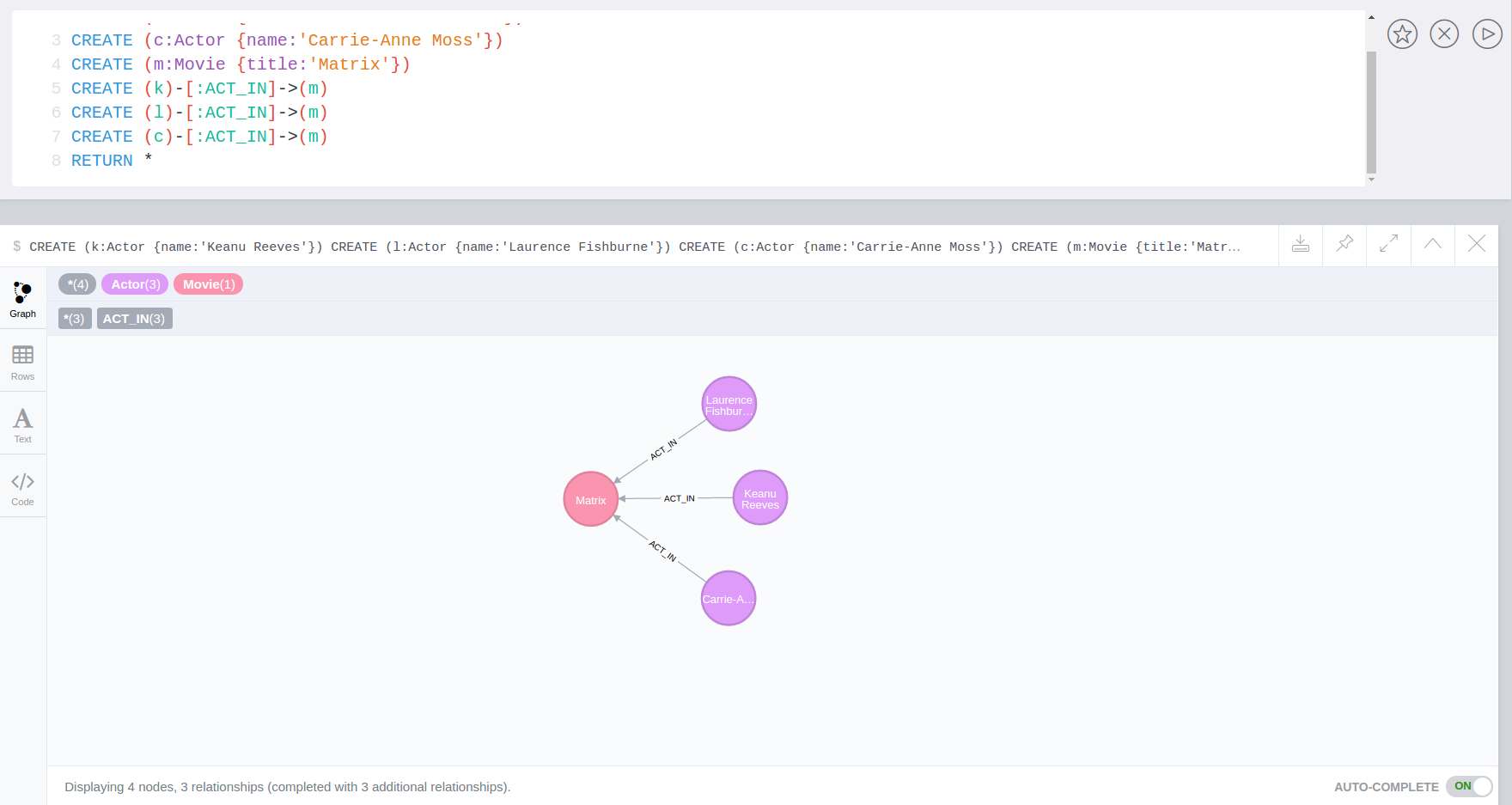

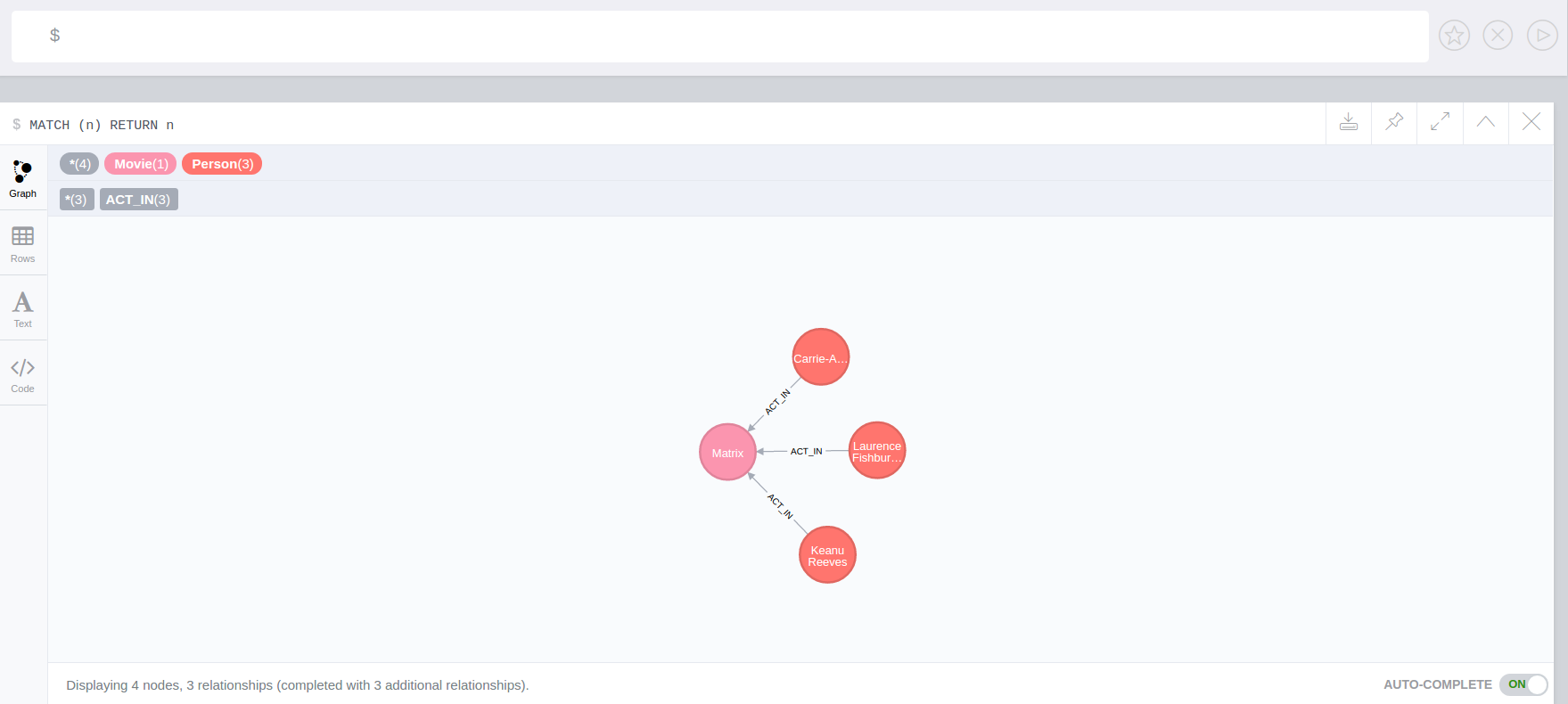

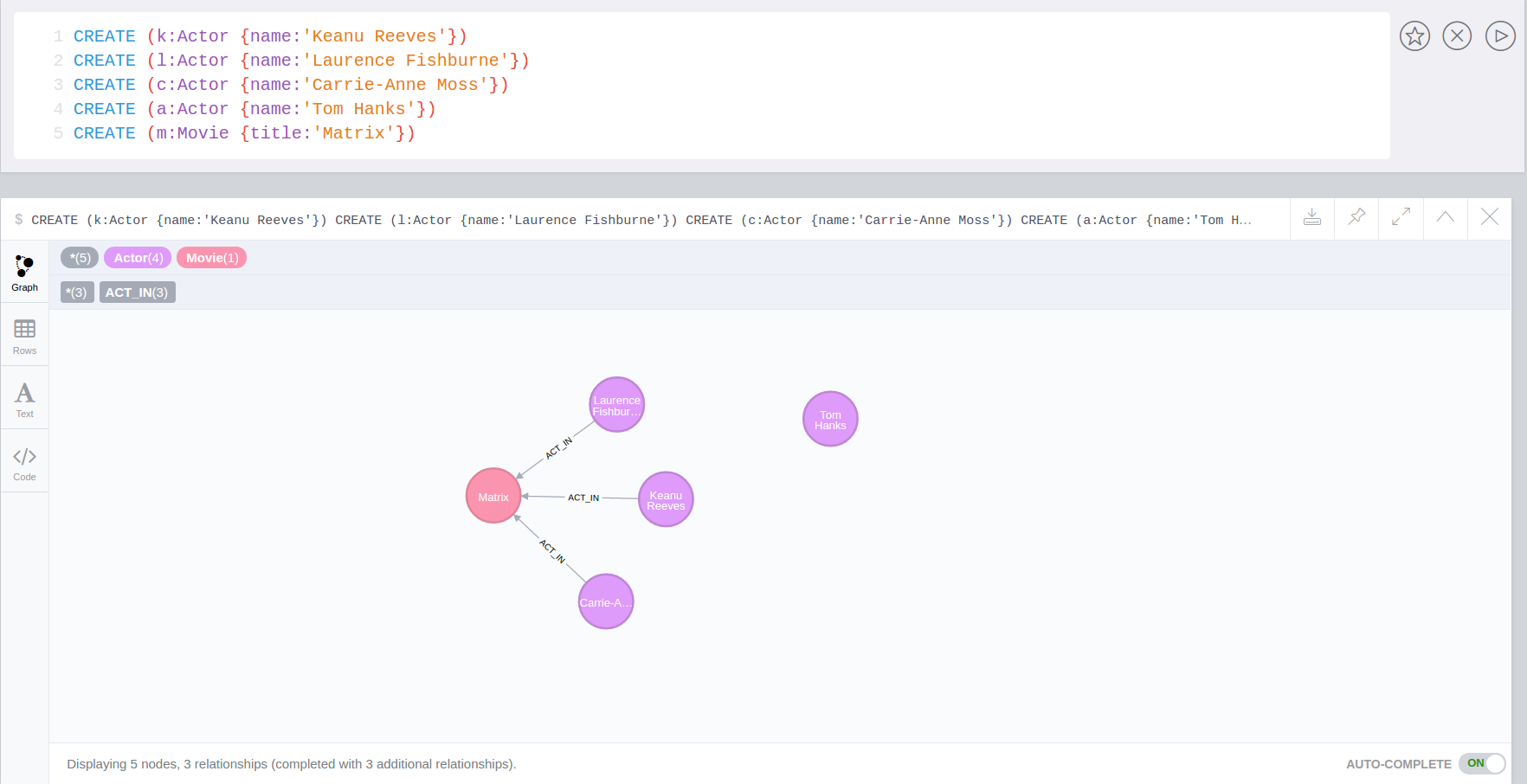

Apoc was the technician and driver on board of the Nebuchadnezzar in the Matrix movie. He was killed by Cypher.

APOC was also the first bundled A Package Of Component for Neo4j in 2009.

APOC also stands for "Awesome Procedures On Cypher"

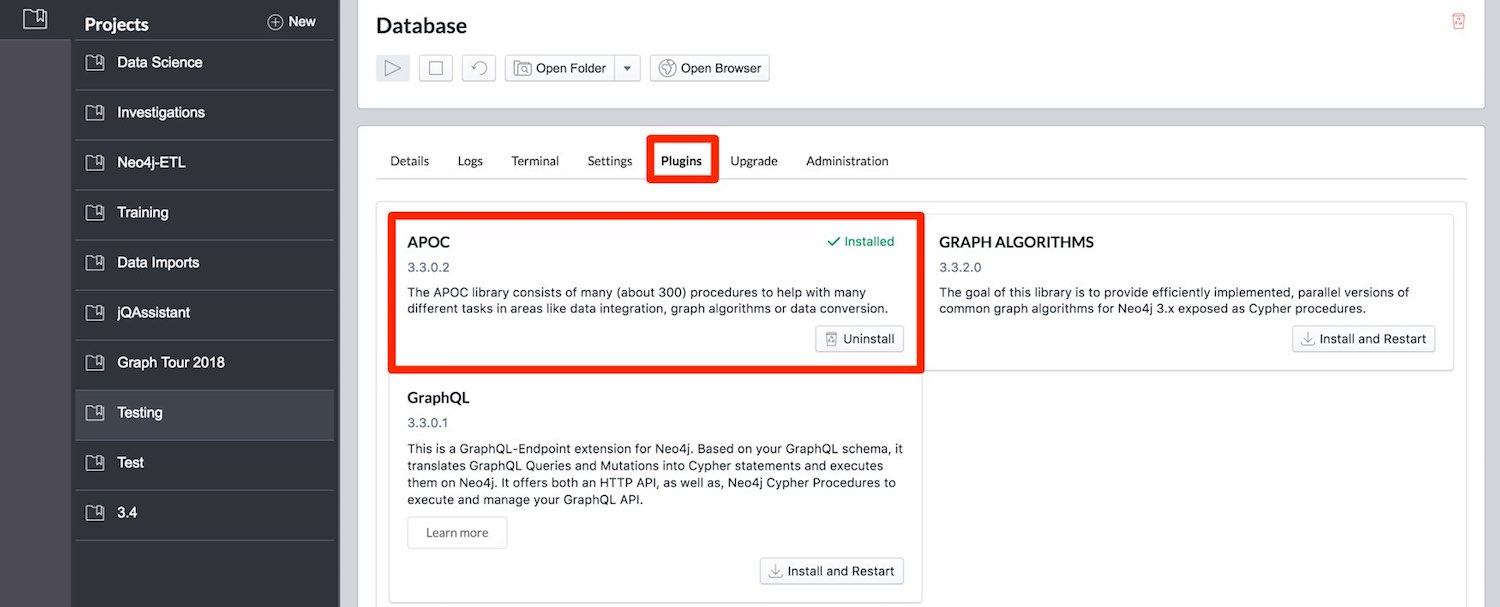

Installation: With Neo4j Desktop

APOC is easily installed with Neo4j Desktop, after creating your database just go to the "Manage" screen and the "Plugins" tab. Then click "Install" in the APOC box and you’re done.

Feedback

Please provide feedback and report bugs as GitHub issues or join the neo4j-users Slack and ask on the #apoc channel.

You might also ask on StackOverflow, please tag your question there with neo4j and apoc.

Calling Procedures & Functions within Cypher

User defined Functions can be used in any expression or predicate, just like built-in functions.

Procedures can be called stand-alone with CALL procedure.name();

But you can also integrate them into your Cypher statements which makes them so much more powerful.

WITH 'https://raw.githubusercontent.com/neo4j-contrib/neo4j-apoc-procedures/{branch}/src/test/resources/person.json' AS url

CALL apoc.load.json(url) YIELD value as person

MERGE (p:Person {name:person.name})

ON CREATE SET p.age = person.age, p.children = size(person.children)Procedure & Function Signatures

To call procedures correctly, you need to know their parameter names, types and positions. And for YIELDing their results, you have to know the output column names and types.

INFO: The signatures are shown in error messages, if you use a procedure incorrectly.

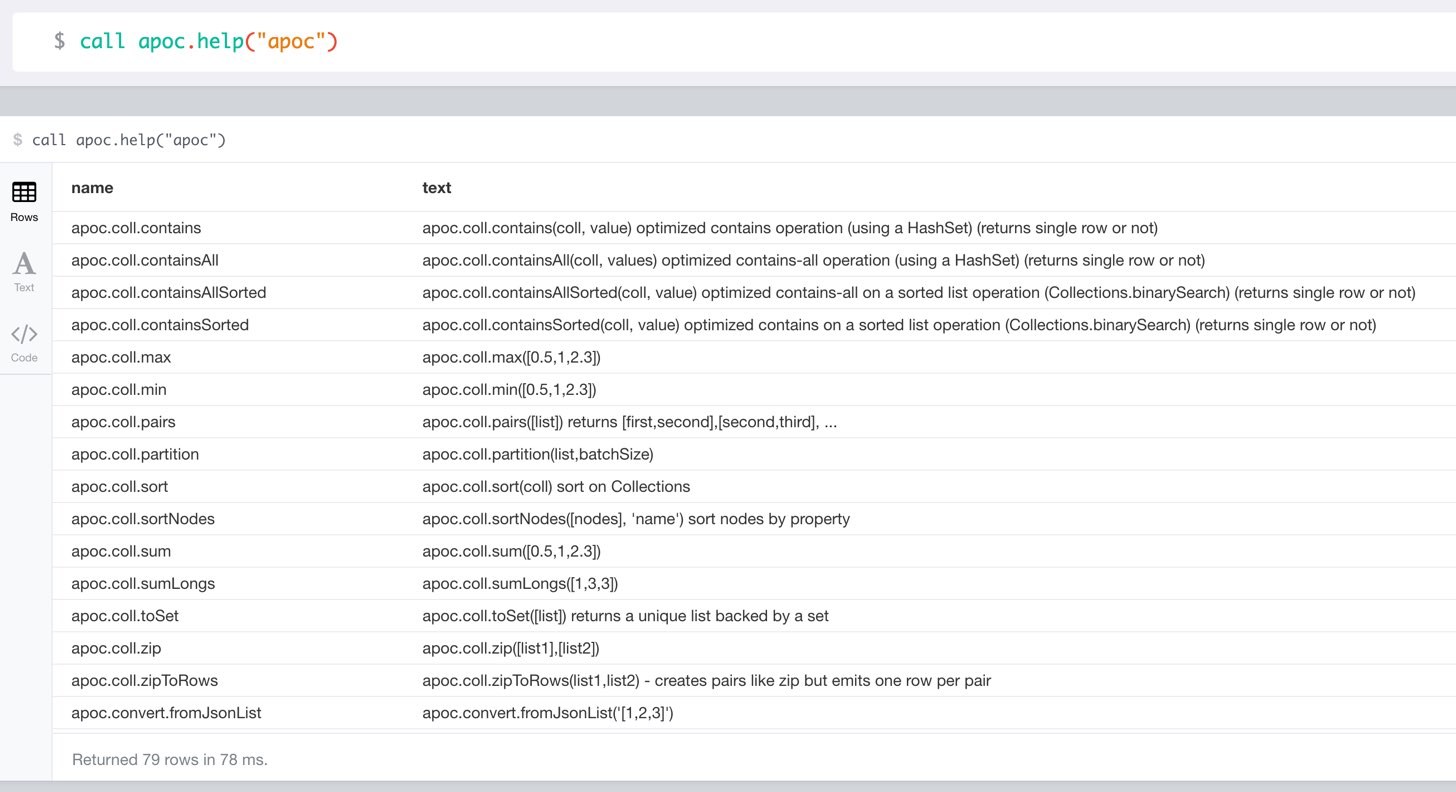

You can see the procedures signature in the output of CALL apoc.help("name") (which itself uses dbms.procedures() and dbms.functions())

CALL apoc.help("dijkstra")The signature is always name : : TYPE, so in this case:

apoc.algo.dijkstra (startNode :: NODE?, endNode :: NODE?, relationshipTypesAndDirections :: STRING?, weightPropertyName :: STRING?) :: (path :: PATH?, weight :: FLOAT?)

| Name | Type |

|---|---|

Procedure Parameters |

|

|

|

|

|

|

|

|

|

Output Return Columns |

|

|

|

|

|

Help and Usage

|

lists name, description-text and if the procedure performs writes, search string is checked against beginning (package) or end (name) of procedure |

CALL apoc.help("apoc") YIELD name, text

WITH * WHERE text IS null

RETURN name AS undocumentedTo generate the help output, apoc utilizes the built in dbms.procedures() and dbms.functions() utilities.

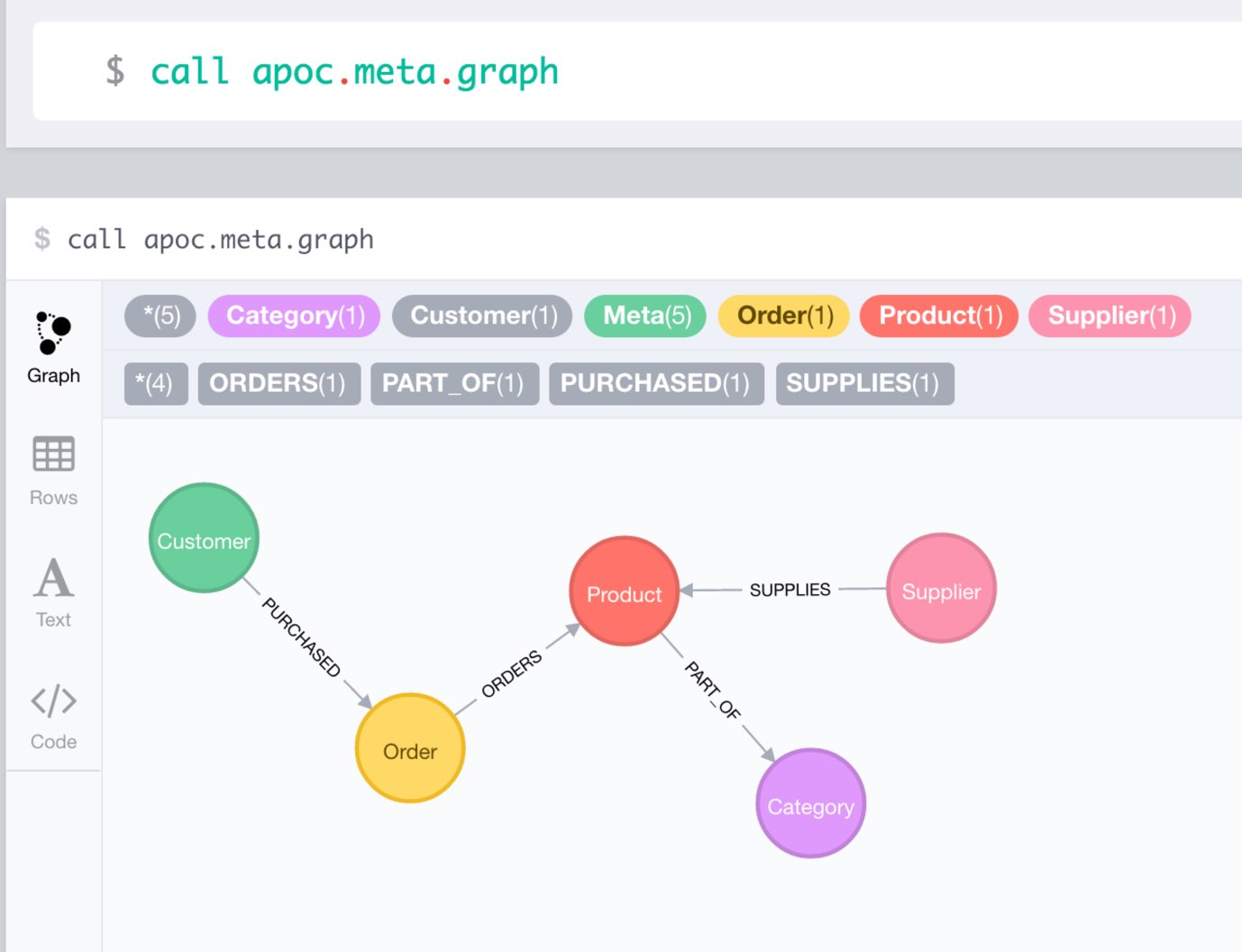

Overview of APOC Procedures & Functions

Notices

|

Warning

|

Neo4j 3.2 has increased security for procedures and functions (aka sandboxing).

Procedures that use internal APIs have to be allowed in If you want to use this via docker, you need to amend |

|

Note

|

You can also whitelist procedures and functions in general to be loaded using: Neo4j 3.2 introduces user defined aggregation functions, we will use that feature in APOC in the future, e.g. for export, graph-algorithms and more, instead of passing in Cypher statements to procedures. Please note that about 70 procedures have been turned from procedures into user defined functions.

This includes, |

User Defined Functions

Introduced in Neo4j 3.1.0-M10

Neo4j 3.1 brings some really neat improvements in Cypher alongside other cool features

If you used or wrote procedures in the past, you most probably came across instances where it felt quite unwieldy to call a procedure just to compute something, convert a value or provide a boolean decision.

For example:

CREATE (v:Value {id:{id}, data:{data}})

WITH v

CALL apoc.date.format(timestamp(), "ms") YIELD value as created

SET v.created = createdYou’d rather write it as a function:

CREATE (v:Value {id:{id}, data:{data}, created: apoc.date.format(timestamp()) })Now in 3.1 that’s possible, and you can also leave off the "ms" and use a single function name, because the unit and format parameters have a default value.

Functions are more limited than procedures: they can’t execute writes or schema operations and are expected to return a single value, not a stream of values. But this makes it also easier to write and use them.

By having information about their types, the Cypher Compiler can also check for applicability.

The signature of the procedure above changed from:

@Procedure("apoc.date.format")

public Stream<StringResult> formatDefault(@Name("time") long time, @Name("unit") String unit) {

return Stream.of(format(time, unit, DEFAULT_FORMAT));

}to the much simpler function signature (ignoring the parameter name and value annotations):

@UserFunction("apoc.date.format")

public String format(@Name("time") long time,

@Name(value="unit", defaultValue="ms") String unit,

@Name(value="format", defaultValue=DEFAULT_FORMAT) String format) {

return getFormatter().format(time, unit, format);

}This can then be called in the manner outlined above.

In our APOC procedure library we already converted about 50 procedures into functions from the following areas:

| package | # of functions | example function |

|---|---|---|

date & time conversion |

3 |

|

number conversion |

3 |

|

general type conversion |

8 |

|

type information and checking |

4 |

|

collection and map functions |

25 |

|

JSON conversion |

4 |

|

string functions |

7 |

|

hash functions |

2 |

|

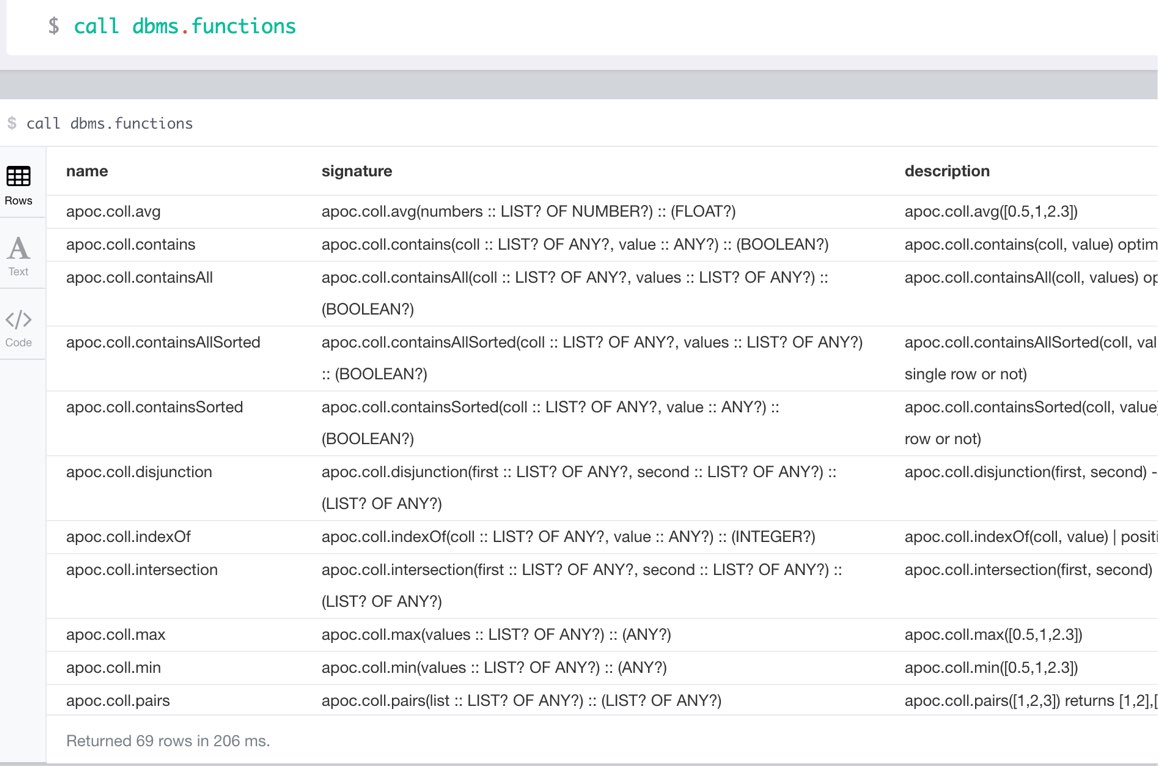

You can list user defined functions with call dbms.functions()

Text and Lookup Indexes

Index Queries

Procedures to add to and query manual indexes

|

Note

|

Please note that there are (case-sensitive) automatic schema indexes, for equality, non-equality, existence, range queries, starts with, ends-with and contains! |

| type | qualified name | description |

|---|---|---|

procedure |

|

apoc.index.addAllNodes('name',{label1:['prop1',…],…}, {options}) YIELD type, name, config - create a free text search index |

procedure |

|

apoc.index.addAllNodesExtended('name',{label1:['prop1',…],…}, {options}) YIELD type, name, config - create a free text search index with special options |

procedure |

|

apoc.index.search('name', 'query', [maxNumberOfResults]) YIELD node, weight - search for nodes in the free text index matching the given query |

procedure |

|

apoc.index.relatedNodes([nodes],label,key,'<TYPE'/'TYPE>'/'TYPE',limit) yield node - schema range scan which keeps index order and adds limit and checks opposite node of relationship against the given set of nodes |

procedure |

|

just use a cypher query with a range predicate on an indexed field and wait for index backed order by in 3.5 |

procedure |

|

just use a cypher query with a range predicate on an indexed field and wait for index backed order by in 3.5 |

procedure |

|

apoc.schema.properties.distinct(label, key) - quickly returns all distinct values for a given key |

procedure |

|

apoc.schema.properties.distinctCount([label], [key]) YIELD label, key, value, count - quickly returns all distinct values and counts for a given key |

procedure |

|

apoc.index.nodes('Label','prop:value*') YIELD node - lucene query on node index with the given label name |

procedure |

|

apoc.index.forNodes('name',{config}) YIELD type,name,config - gets or creates node index |

procedure |

|

apoc.index.forRelationships('name',{config}) YIELD type,name,config - gets or creates relationship index |

procedure |

|

apoc.index.remove('name') YIELD type,name,config - removes an manual index |

procedure |

|

apoc.index.list() - YIELD type,name,config - lists all manual indexes |

procedure |

|

apoc.index.relationships('TYPE','prop:value*') YIELD rel - lucene query on relationship index with the given type name |

procedure |

|

apoc.index.between(node1,'TYPE',node2,'prop:value*') YIELD rel - lucene query on relationship index with the given type name bound by either or both sides (each node parameter can be null) |

procedure |

|

out(node,'TYPE','prop:value*') YIELD node - lucene query on relationship index with the given type name for outgoing relationship of the given node, returns end-nodes |

procedure |

|

apoc.index.in(node,'TYPE','prop:value*') YIELD node lucene query on relationship index with the given type name for incoming relationship of the given node, returns start-nodes |

procedure |

|

apoc.index.addNode(node,['prop1',…]) add node to an index for each label it has |

procedure |

|

apoc.index.addNodeMap(node,{key:value}) add node to an index for each label it has with the given attributes which can also be computed |

procedure |

|

apoc.index.addNodeMapByName(index, node,{key:value}) add node to an index for each label it has with the given attributes which can also be computed |

procedure |

|

apoc.index.addNodeByLabel(node,'Label',['prop1',…]) add node to an index for the given label |

procedure |

|

apoc.index.addNodeByName('name',node,['prop1',…]) add node to an index for the given name |

procedure |

|

apoc.index.addRelationship(rel,['prop1',…]) add relationship to an index for its type |

procedure |

|

apoc.index.addRelationshipMap(rel,{key:value}) add relationship to an index for its type indexing the given document which can be computed |

procedure |

|

apoc.index.addRelationshipMapByName(index, rel,{key:value}) add relationship to an index for its type indexing the given document which can be computed |

procedure |

|

apoc.index.addRelationshipByName('name',rel,['prop1',…]) add relationship to an index for the given name |

procedure |

|

apoc.index.removeNodeByName('name',node) remove node from an index for the given name |

procedure |

|

apoc.index.removeRelationshipByName('name',rel) remove relationship from an index for the given name |

function |

|

apoc.index.nodes.count('Label','prop:value*') YIELD value - lucene query on node index with the given label name |

function |

|

apoc.index.relationships.count('Type','prop:value*') YIELD value - lucene query on relationship index with the given type name |

function |

|

apoc.index.between.count(node1,'TYPE',node2,'prop:value*') YIELD value - lucene query on relationship index with the given type name bound by either or both sides (each node parameter can be null) |

function |

|

apoc.index.out.count(node,'TYPE','prop:value*') YIELD value - lucene query on relationship index with the given type name for outgoing relationship of the given node, returns count-end-nodes |

function |

|

apoc.index.in.count(node1,'TYPE',node2,'prop:value*') YIELD value - lucene query on relationship index with the given type name for incoming relationship of the given node, returns count-start-nodes |

Index Management

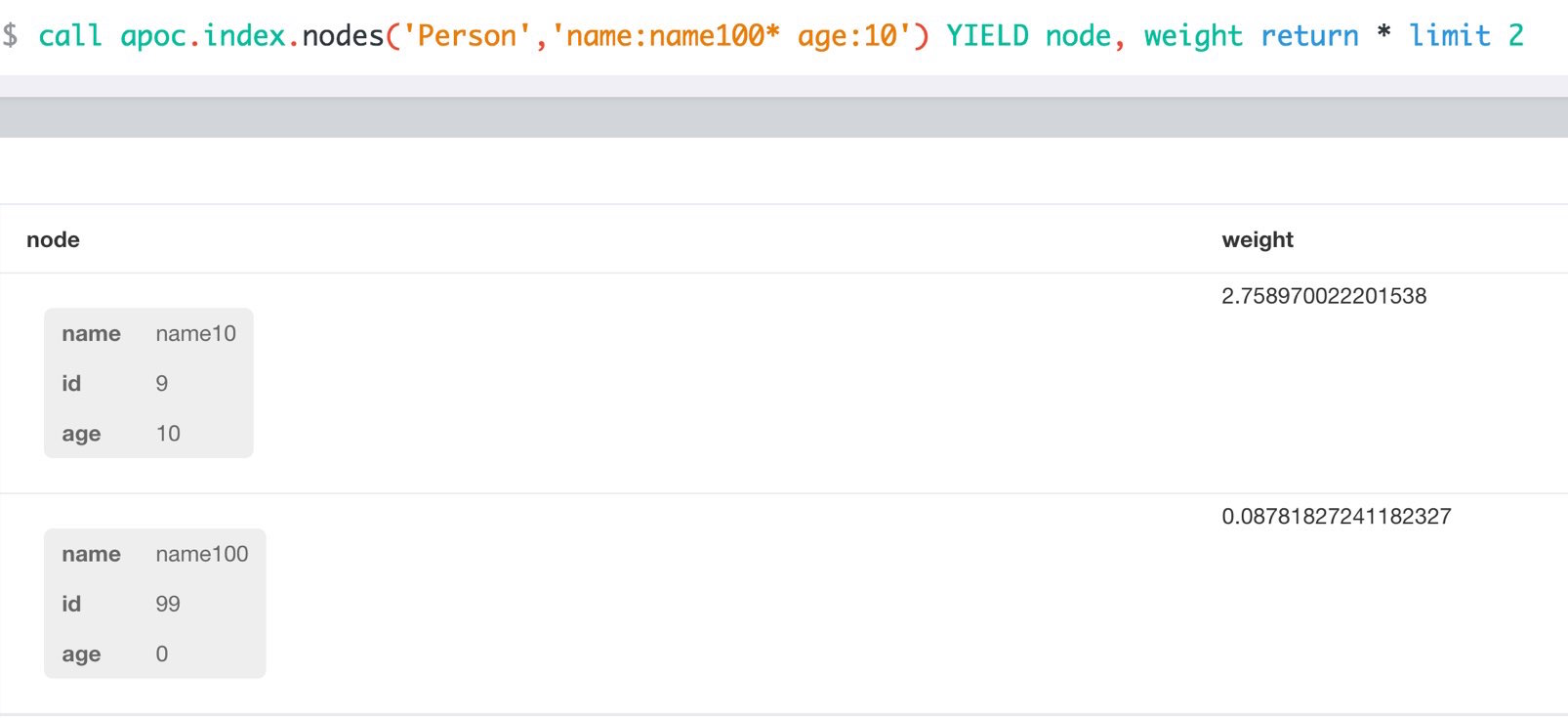

match (p:Person) call apoc.index.addNode(p,["name","age"]) RETURN count(*);

// 129s for 1M People

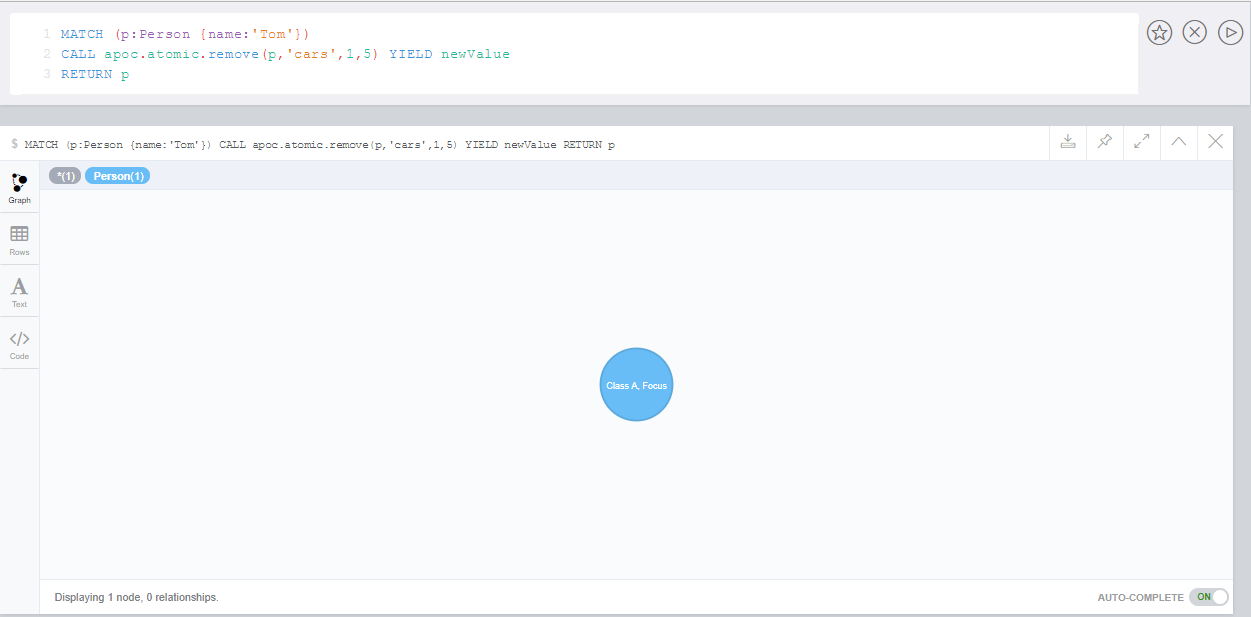

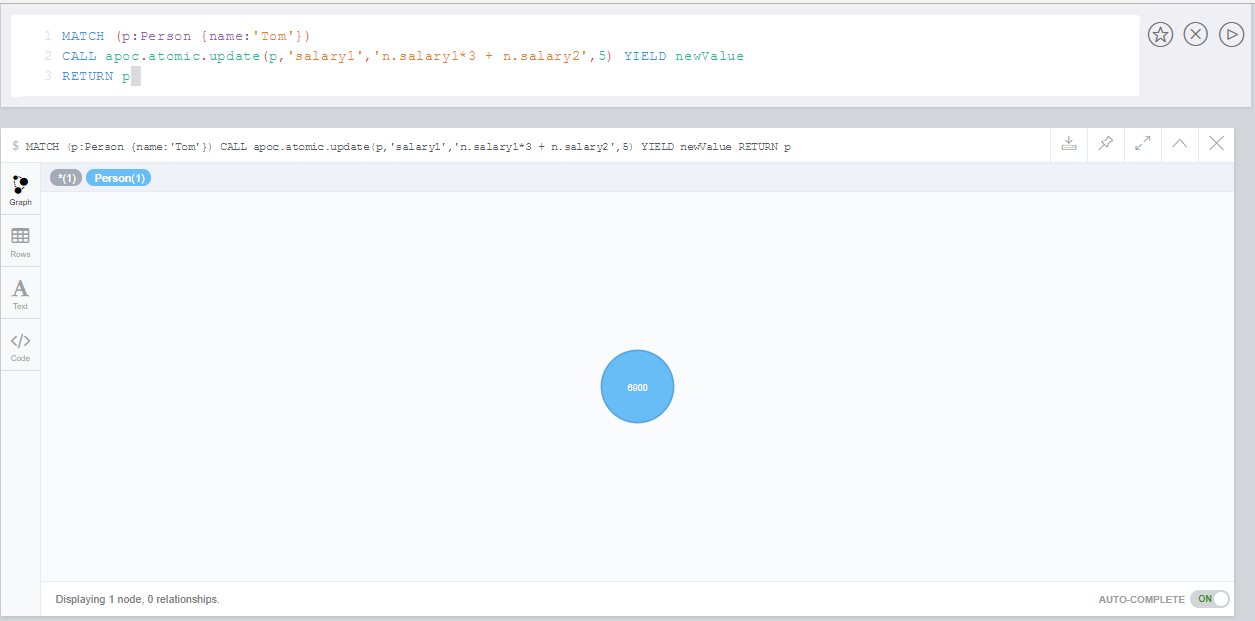

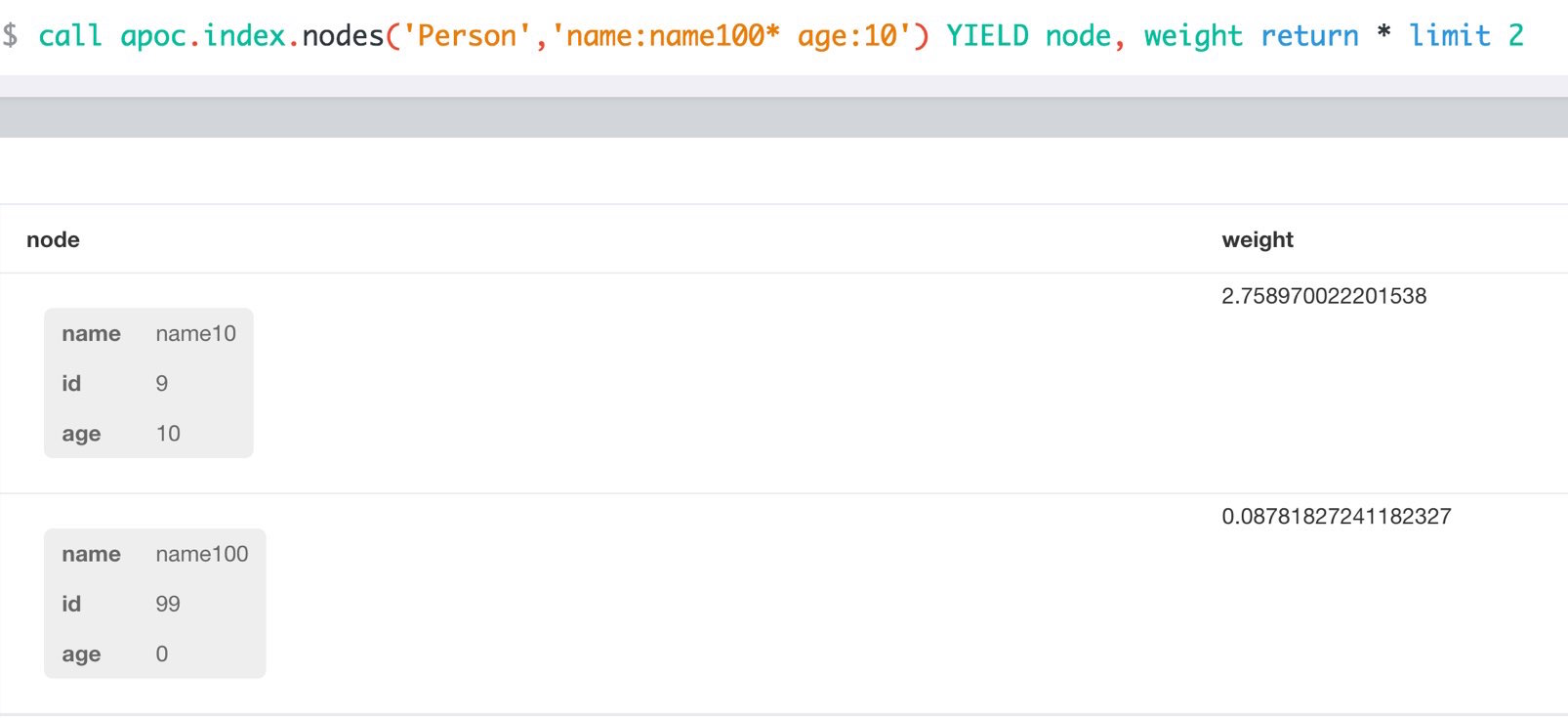

call apoc.index.nodes('Person','name:name100*') YIELD node, weight return * limit 2Manual Indexes

Data Used

The below examples use flight data.

Here is a sample subset of the data that can be load to try the procedures:

CREATE (slc:Airport {abbr:'SLC', id:14869, name:'SALT LAKE CITY INTERNATIONAL'})

CREATE (oak:Airport {abbr:'OAK', id:13796, name:'METROPOLITAN OAKLAND INTERNATIONAL'})

CREATE (bur:Airport {abbr:'BUR', id:10800, name:'BOB HOPE'})

CREATE (f2:Flight {flight_num:6147, day:2, month:1, weekday:6, year:2016})

CREATE (f9:Flight {flight_num:6147, day:9, month:1, weekday:6, year:2016})

CREATE (f16:Flight {flight_num:6147, day:16, month:1, weekday:6, year:2016})

CREATE (f23:Flight {flight_num:6147, day:23, month:1, weekday:6, year:2016})

CREATE (f30:Flight {flight_num:6147, day:30, month:1, weekday:6, year:2016})

CREATE (f2)-[:DESTINATION {arr_delay:-13, taxi_time:9}]->(oak)

CREATE (f9)-[:DESTINATION {arr_delay:-8, taxi_time:4}]->(bur)

CREATE (f16)-[:DESTINATION {arr_delay:-30, taxi_time:4}]->(slc)

CREATE (f23)-[:DESTINATION {arr_delay:-21, taxi_time:3}]->(slc)

CREATE (f30)-[:DESTINATION]->(slc)Using Manual Index on Node Properties

In order to create manual index on a node property, you call apoc.index.addNode with the node, providing the properties to be indexed.

MATCH (a:Airport)

CALL apoc.index.addNode(a,['name'])

RETURN count(*)The statement will create the node index with the same name as the Label name(s) of the node in this case Airport and add the node by their properties to the index.

Once this has been added check if the node index exists using apoc.index.list.

CALL apoc.index.list()Usually apoc.index.addNode would be used as part of node-creation, e.g. during LOAD CSV.

There is also apoc.index.addNodes for adding a list of multiple nodes at once.

Once the node index is created we can start using it.

Here are some examples:

The apoc.index.nodes finds nodes in a manual index using the given lucene query.

|

Note

|

That makes only sense if you combine multiple properties in one lookup or use case insensitive or fuzzy matching full-text queries. In all other cases the built in schema indexes should be used. |

CALL apoc.index.nodes('Airport','name:inter*') YIELD node AS airport, weight

RETURN airport.name, weight

LIMIT 10|

Note

|

Apoc index queries not only return nodes and relationships but also a weight, which is the score returned from the underlying Lucene index. The results are also sorted by that score. That’s especially helpful for partial and fuzzy text searches. |

To remove the node index Airport created, use:

CALL apoc.index.remove('Airport')Add "document" to index

Instead of the key-value pairs of a node or relationship properties, you can also compute a map containing information and add that to the index. So you could find a node or relationship by information from it’s neighbours or relationships.

CREATE (company:Company {name:'Neo4j,Inc.'})

CREATE (company)<-[:WORKS_AT {since:2013}]-(:Employee {name:'Mark'})

CREATE (company)<-[:WORKS_AT {since:2014}]-(:Employee {name:'Martin'})MATCH (company:Company)<-[worksAt:WORKS_AT]-(employee)

WITH company, { name: company.name, employees:collect(employee.name),startDates:collect(worksAt.since)} as data

CALL apoc.index.addNodeMap(company, data)

RETURN count(*)These could be example searches that all return the same result node.

CALL apoc.index.nodes('Company','name:Ne* AND employees:Ma*')CALL apoc.index.nodes('Company','employees:Ma*')CALL apoc.index.nodes('Company','startDates:[2013 TO 2014]')Using Manual Index on Relationship Properties

The procedure apoc.index.addRelationship is used to create a manual index on relationship properties.

As there are no schema indexes for relationships, these manual indexes can be quite useful.

MATCH (:Flight)-[r:DESTINATION]->(:Airport)

CALL apoc.index.addRelationship(r,['taxi_time'])

RETURN count(*)The statement will create the relationship index with the same name as relationship-type, in this case DESTINATION and add the relationship by its properties to the index.

Using apoc.index.relationships, we can find the relationship of type DESTINATION with the property taxi_time of 11 minutes.

We can chose to also return the start and end-node.

CALL apoc.index.relationships('DESTINATION','taxi_time:11') YIELD rel, start AS flight, end AS airport

RETURN flight_num.flight_num, airport.name;|

Note

|

Manual relationship indexed do not only store the relationship by its properties but also the start- and end-node. |

That’s why we can use that information to subselect relationships not only by property but also by those nodes, which is quite powerful.

With apoc.index.in we can pin the node with incoming relationships (end-node) to get the start nodes for all the DESTINATION relationships.

For instance to find all flights arriving in 'SALT LAKE CITY INTERNATIONAL' with a taxi_time of 7 minutes we’d use:

MATCH (a:Airport {name:'SALT LAKE CITY INTERNATIONAL'})

CALL apoc.index.in(a,'DESTINATION','taxi_time:7') YIELD node AS flight

RETURN flightThe opposite is apoc.index.out, which takes and binds end-nodes and returns start-nodes of relationships.

Really useful to quickly find a subset of relationships between nodes with many relationships (tens of thousands to millions) is apoc.index.between.

Here you bind both the start and end-node and provide (or not) properties of the relationships.

MATCH (f:Flight {flight_num:6147})

MATCH (a:Airport {name:'SALT LAKE CITY INTERNATIONAL'})

CALL apoc.index.between(f,'DESTINATION',a,'taxi_time:7') YIELD rel, weight

RETURN *To remove the relationship index DESTINATION that was created, use.

CALL apoc.index.remove('DESTINATION')Full Text Search

Indexes are used for finding nodes in the graph that further operations can then continue from. Just like in a book where you look at the index to find a section that interest you, and then start reading from there. A full text index allows you to find occurrences of individual words or phrases across all attributes.

In order to use the full text search feature, we have to first index our data by specifying all the attributes we want to index.

Here we create a full text index called “locations” (we will use this name when searching in the index) with our data.

|

Note

|

by default these fulltext indexes do not automatically track changes you perform in your graph. See …. for how to enabled automatic index tracking. |

CALL apoc.index.addAllNodes('locations',{

Company: ["name", "description"],

Person: ["name","address"],

Address: ["address"]})Creating the index will take a little while since the procedure has to read through the entire database to create the index.

We can now use this index to search for nodes in the database. The most simple case would be to search across all data for a particular word.

It does not matter which property that word exists in, any node that has that word in any of its indexed properties will be found.

If you use a name in the call, all occurrences will be found (but limited to 100 results).

CALL apoc.index.search("locations", 'name')Advanced Search

We can further restrict our search to only searching in a particular attribute.

In order to search for a Person with an address in France, we use the following.

CALL apoc.index.search("locations", "Person.address:France")Now we can search for nodes with a specific property value, and then explore their neighbourhoods visually.

But integrating it with an graph query is so much more powerful.

Fulltext and Graph Search

We could for instance search for addresses in the database that contain the word "Paris", and then find all companies registered at those addresses:

CALL apoc.index.search("locations", "Address.address:Paris~") YIELD node AS addr

MATCH (addr)<-[:HAS_ADDRESS]-(company:Company)

RETURN company LIMIT 50The tilde (~) instructs the index search procedure to do a fuzzy match, allowing us to find "Paris" even if the spelling is slightly off.

We might notice that there are addresses that contain the word “Paris” that are not in Paris, France. For example there might be a Paris Street somewhere.

We can further specify that we want the text to contain both the word Paris, and the word France:

CALL apoc.index.search("locations", "+Address.address:Paris~ +France~")

YIELD node AS addr

MATCH (addr)<-[:HAS_ADDRESS]-(company:Company)

RETURN company LIMIT 50Complex Searches

Things start to get interesting when we look at how the different entities in Paris are connected to one another. We can do that by finding all the entities with addresses in Paris, then creating all pairs of such entities and finding the shortest path between each such pair:

CALL apoc.index.search("locations", "+Address.address:Paris~ +France~") YIELD node AS addr

MATCH (addr)<-[:HAS_ADDRESS]-(company:Company)

WITH collect(company) AS companies

// create unique pairs

UNWIND companies AS x UNWIND companies AS y

WITH x, y WHERE ID(x) < ID(y)

MATCH path = shortestPath((x)-[*..10]-(y))

RETURN pathFor more details on the query syntax used in the second parameter of the search procedure,

please see this Lucene query tutorial

Index Configuration

apoc.index.addAllNodes(<name>, <labelPropsMap>, <option>) allows to fine tune your indexes using the options parameter defaulting to an empty map.

All standard options for Neo4j manual indexes are allowed plus apoc specific options:

| name | value | description |

|---|---|---|

|

|

type of the index |

|

|

if terms should be converted to lower case before indexing |

|

|

classname of lucene analyzer to be used for this index |

|

|

classname for lucene similarity to be used for this index |

|

|

if this index should be tracked for graph updates |

|

Note

|

An index configuration cannot be changed once the index is created.

However subsequent invocations of apoc.index.addAllNodes will delete the index if existing and create it afterwards.

|

Automatic Index Tracking for Manual Indexes

As mentioned above, apoc.index.addAllNodes() populates an fulltext index.

But it does not track changes being made to the graph and reflect these changes to the index.

You would have to rebuild that index regularly yourself.

Or alternatively use the automatic index tracking, that keeps the index in sync with your graph changes. To enable this feature a two step configuration approach is required.

|

Note

|

Please note that there is a performance impact if you enable automatic index tracking. |

neo4j.conf setapoc.autoIndex.enabled=trueThis global setting will initialize a transaction event handler to take care of reflecting changes of any added nodes, deleted nodes, changed properties to the indexes.

In addition to enable index tracking globally using apoc.autoIndex.enabled each individual index must be configured as "trackable" by setting autoUpdate:true in the options when initially creating an index:

CALL apoc.index.addAllNodes('locations',{

Company: ["name", "description"],

Person: ["name","address"],

Address: ["address"]}, {autoUpdate:true})By default index tracking is done synchronously. That means updates to fulltext indexes are part of same transaction as the originating change (e.g. changing a node property). While this guarantees instant consistency it has an impact on performance.

Alternatively, you can decide to perform index updates asynchronously in a separate thread by setting this flag in neo4j.conf

apoc.autoIndex.async=trueWith this setting enabled, index updates are fed to a buffer queue that is consumed asynchronously using transaction batches. The batching can be further configured using

apoc.autoIndex.queue_capacity=100000

apoc.autoIndex.async_rollover_opscount=50000

apoc.autoIndex.async_rollover_millis=5000

apoc.autoIndex.tx_handler_stopwatch=falseThe values above are the default setting. In this example the index updates are consumed in transactions of maximum 50000 operations or 5000 milliseconds - whichever triggers first will cause the index update transaction to be committed and rolled over.

If apoc.autoIndex.tx_handler_stopwatch is enabled, the time spent in beforeCommit and afterCommit is traced to debug.log.

Use this setting only for diagnosis.

A Worked Example on Fulltext Index Tracking

This section provides a small but still usable example to understand automatic index updates.

Make sure apoc.autoIndex.enabled=true is set.

First we create some nodes - note there’s no index yet.

UNWIND ["Johnny Walker", "Jim Beam", "Jack Daniels"] as name CREATE (:Person{name:name})Now we index them:

CALL apoc.index.addAllNodes('people', { Person:["name"]}, {autoUpdate:true})Check if we can find "Johnny" - we expect one result.

CALL apoc.index.search("people", "Johnny") YIELD node, weight

RETURN node.name, weightAdding some more people - note, we have another "Johnny":

UNWIND ["Johnny Rotten", "Axel Rose"] as name CREATE (:Person{name:name})Again we’re search for "Johnny", expecting now two of them:

CALL apoc.index.search("people", "Johnny") YIELD node, weight

RETURN node.name, weightUtility Functions

Phonetic Text Procedures

The phonetic text (soundex) procedures allow you to compute the soundex encoding of a given string. There is also a procedure to compare how similar two strings sound under the soundex algorithm. All soundex procedures by default assume the used language is US English.

CALL apoc.text.phonetic('Hello, dear User!') YIELD value

RETURN value // will return 'H436'CALL apoc.text.phoneticDelta('Hello Mr Rabbit', 'Hello Mr Ribbit') // will return '4' (very similar)Extract Domain

The User Function apoc.data.domain will take a url or email address and try to determine the domain name.

This can be useful to make easier correlations and equality tests between differently formatted email addresses, and between urls to the same domains but specifying different locations.

WITH 'foo@bar.com' AS email

RETURN apoc.data.domain(email) // will return 'bar.com'WITH 'http://www.example.com/all-the-things' AS url

RETURN apoc.data.domain(url) // will return 'www.example.com'TimeToLive (TTL) - Expire Nodes

Enable cleanup of expired nodes in neo4j.conf with apoc.ttl.enabled=true

30s after startup an index is created:

CREATE INDEX ON :TTL(ttl)At startup a statement is scheduled to run every 60s (or configure in neo4j.conf - apoc.ttl.schedule=120)

MATCH (t:TTL) where t.ttl < timestamp() WITH t LIMIT 1000 DETACH DELETE tThe ttl property holds the time when the node is expired in milliseconds since epoch.

You can expire your nodes by setting the :TTL label and the ttl property:

MATCH (n:Foo) WHERE n.bar SET n:TTL, n.ttl = timestamp() + 10000;There is also a procedure that does the same:

CALL apoc.date.expire(node,time,'time-unit');

CALL apoc.date.expire(n,100,'s');Date and Time Conversions

(thanks @tkroman)

Conversion between formatted dates and timestamps

-

apoc.date.parse('2015/03/25 03-15-59',['s'],['yyyy/MM/dd HH/mm/ss'])same as previous, but accepts custom datetime format -

apoc.date.format(12345,['s'], ['yyyy/MM/dd HH/mm/ss'])the same as previous, but accepts custom datetime format -

possible unit values:

ms,s,m,h,dand their long forms. -

possible time zone values: Either an abbreviation such as

PST, a full name such asAmerica/Los_Angeles, or a custom ID such asGMT-8:00. Full names are recommended.

Conversion of timestamps between different time units

-

apoc.date.convert(12345, 'ms', 'd')convert a timestamp in one time unit into one of a different time unit -

possible unit values:

ms,s,m,h,dand their long forms.

Adding/subtracting time unit values to timestamps

-

apoc.date.add(12345, 'ms', -365, 'd')given a timestamp in one time unit, adds a value of the specified time unit -

possible unit values:

ms,s,m,h,dand their long forms.

Current timestamp

apoc.date.currentTimestamp() provides the System.currentTimeMillis which is current throughout transaction execution compared to Cypher’s timestamp() function which does not update within a transaction

Reading separate datetime fields:

Splits date (optionally, using given custom format) into fields returning a map from field name to its value.

RETURN apoc.date.fields('2015-03-25 03:15:59')Following fields are supported:

| Result field | Represents |

|---|---|

'years' |

year |

'months' |

month of year |

'days' |

day of month |

'hours' |

hour of day |

'minutes' |

minute of hour |

'seconds' |

second of minute |

'zone' |

Examples

RETURN apoc.date.fields('2015-01-02 03:04:05 EET', 'yyyy-MM-dd HH:mm:ss zzz') {

'weekdays': 5,

'years': 2015,

'seconds': 5,

'zoneid': 'EET',

'minutes': 4,

'hours': 3,

'months': 1,

'days': 2

}

RETURN apoc.date.fields('2015/01/02_EET', 'yyyy/MM/dd_z') {

'weekdays': 5,

'years': 2015,

'zoneid': 'EET',

'months': 1,

'days': 2

}

Notes on formats:

-

the default format is

yyyy-MM-dd HH:mm:ss -

if the format pattern doesn’t specify timezone, formatter considers dates to belong to the UTC timezone

-

if the timezone pattern is specified, the timezone is extracted from the date string, otherwise an error will be reported

-

the

to/fromSecondstimestamp values are in POSIX (Unix time) system, i.e. timestamps represent the number of seconds elapsed since 00:00:00 UTC, Thursday, 1 January 1970 -

the full list of supported formats is described in SimpleDateFormat JavaDoc

Reading single datetime field from UTC Epoch:

Extracts the value of one field from a datetime epoch.

RETURN apoc.date.field(12345)Following fields are supported:

| Result field | Represents |

|---|---|

'years' |

year |

'months' |

month of year |

'days' |

day of month |

'hours' |

hour of day |

'minutes' |

minute of hour |

'seconds' |

second of minute |

'millis' |

milliseconds of a second |

Number Format Conversions

Conversion between formatted decimals

-

apoc.number.format(number)format a long or double using the default system pattern and language to produce a string -

apoc.number.format(number, pattern)format a long or double using a pattern and the default system language to produce a string -

apoc.number.format(number, lang)format a long or double using the default system pattern pattern and a language to produce a string -

apoc.number.format(number, pattern, lang)format a long or double using a pattern and a language to produce a string -

apoc.number.parseInt(text)parse a text using the default system pattern and language to produce a long -

apoc.number.parseInt(text, pattern)parse a text using a pattern and the default system language to produce a long -

apoc.number.parseInt(text, '', lang)parse a text using the default system pattern and a language to produce a long -

apoc.number.parseInt(text, pattern, lang)parse a text using a pattern and a language to produce a long -

apoc.number.parseFloat(text)parse a text using the default system pattern and language to produce a double -

apoc.number.parseFloat(text, pattern)parse a text using a pattern and the default system language to produce a double -

apoc.number.parseFloat(text,'',lang)parse a text using the default system pattern and a language to produce a double -

apoc.number.parseFloat(text, pattern, lang)parse a text using a pattern and a language to produce a double -

The full list of supported values for

patternandlangparams is described in DecimalFormat JavaDoc

Examples

return apoc.number.format(12345.67) as value ╒═════════╕ │value │ ╞═════════╡ │12,345.67│ └─────────┘

return apoc.number.format(12345, '#,##0.00;(#,##0.00)', 'it') as value ╒═════════╕ │value │ ╞═════════╡ │12.345,00│ └─────────

return apoc.number.format(12345.67, '#,##0.00;(#,##0.00)', 'it') as value ╒═════════╕ │value │ ╞═════════╡ │12.345,67│ └─────────┘

return apoc.number.parseInt('12.345', '#,##0.00;(#,##0.00)', 'it') as value

╒═════╕

│value│

╞═════╡

│12345│

└─────┘

return apoc.number.parseFloat('12.345,67', '#,##0.00;(#,##0.00)', 'it') as value

╒════════╕

│value │

╞════════╡

│12345.67│

└────────┘

return apoc.number.format('aaa') as value

null beacuse 'aaa' isn't a number

RETURN apoc.number.parseInt('aaa')

Return null because 'aaa' is unparsable.

Exact

Handle BigInteger And BigDecimal

| Statement | Description | Return type |

|---|---|---|

RETURN apoc.number.exact.add(stringA,stringB) |

return the sum’s result of two large numbers |

string |

RETURN apoc.number.exact.sub(stringA,stringB) |

return the substraction’s of two large numbers |

string |

RETURN apoc.number.exact.mul(stringA,stringB,[prec],[roundingModel] |

return the multiplication’s result of two large numbers |

string |

RETURN apoc.number.exact.div(stringA,stringB,[prec],[roundingModel]) |

return the division’s result of two large numbers |

string |

RETURN apoc.number.exact.toInteger(string,[prec],[roundingMode]) |

return the Integer value of a large number |

Integer |

RETURN apoc.number.exact.toFloat(string,[prec],[roundingMode]) |

return the Float value of a large number |

Float |

RETURN apoc.number.exact.toExact(number) |

return the exact value |

Integer |

-

Possible 'roundingModel' options are

UP,DOWN,CEILING,FLOOR,HALF_UP,HALF_DOWN,HALF_EVEN,UNNECESSARY

The prec parameter let us to set the precision of the operation result.

The default value is 0 (unlimited precision arithmetic) while for 'roundingModel' the default value is HALF_UP. For other information abouth prec and roundingModel see the documentation of MathContext

For example if we set as prec 2:

return apoc.number.exact.div('5555.5555','5', 2, 'HALF_DOWN') as value

╒═════════╕

│value │

╞═════════╡

│ 1100 │

└─────────┘

As a result we have only the first two digits precise. If we set 8 we have all the result precise

return apoc.number.exact.div('5555.5555','5', 8, 'HALF_DOWN') as value

╒═════════╕

│value │

╞═════════╡

│1111.1111│

└─────────┘

All the functions accept as input the scientific notation as 1E6, for example:

return apoc.number.exact.add('1E6','1E6') as value

╒═════════╕

│value │

╞═════════╡

│ 2000000 │

└─────────┘

For other information see the documentation about BigDecimal and BigInteger

Diff

Diff is a user function to return a detailed diff between two nodes.

apoc.diff.nodes([leftNode],[rightNode])

Example

CREATE

(n:Person{name:'Steve',age:34, eyes:'blue'}),

(m:Person{name:'Jake',hair:'brown',age:34})

WITH n,m

return apoc.diff.nodes(n,m)Resulting JSON body:

{

"leftOnly": {

"eyes": "blue"

},

"inCommon": {

"age": 34

},

"different": {

"name": {

"left": "Steve",

"right": "Jake"

}

},

"rightOnly": {

"hair": "brown"

}

}Graph Algorithms

Algorithm Procedures

Community Detection via Label Propagation

APOC includes a simple procedure for label propagation. It may be used to detect communities or solve other graph partitioning problems. The following example shows how it may be used.

The example call with scan all nodes 25 times. During a scan the procedure will look at all outgoing relationships of type :X for each node n. For each of these relationships, it will compute a weight and use that as a vote for the other node’s 'partition' property value. Finally, n.partition is set to the property value that acquired the most votes.

Weights are computed by multiplying the relationship weight with the weight of the other nodes. Both weights are taken from the 'weight' property; if no such property is found, the weight is assumed to be 1.0. Similarly, if no 'weight' property key was specified, all weights are assumed to be 1.0.

CALL apoc.algo.community(25,null,'partition','X','OUTGOING','weight',10000)The second argument is a list of label names and may be used to restrict which nodes are scanned.

Expand paths

Expand from start node following the given relationships from min to max-level adhering to the label filters. Several variations exist:

apoc.path.expand() expands paths using Cypher’s default expansion modes (bfs and 'RELATIONSHIP_PATH' uniqueness)

apoc.path.expandConfig() allows more flexible configuration of parameters and expansion modes

apoc.path.subgraphNodes() expands to nodes of a subgraph

apoc.path.subgraphAll() expands to nodes of a subgraph and also returns all relationships in the subgraph

apoc.path.spanningTree() expands to paths collectively forming a spanning tree

Expand

CALL apoc.path.expand(startNode <id>|Node, relationshipFilter, labelFilter, minLevel, maxLevel )

CALL apoc.path.expand(startNode <id>|Node|list, 'TYPE|TYPE_OUT>|<TYPE_IN', '+YesLabel|-NoLabel|/TerminationLabel|>EndNodeLabel', minLevel, maxLevel ) yield pathRelationship Filter

Syntax: [<]RELATIONSHIP_TYPE1[>]|[<]RELATIONSHIP_TYPE2[>]|…

| input | type | direction |

|---|---|---|

|

|

OUTGOING |

|

|

INCOMING |

|

|

BOTH |

|

|

OUTGOING |

|

|

INCOMING |

Label Filter

Syntax: [+-/>]LABEL1|LABEL2|*|…

| input | result |

|---|---|

|

blacklist filter - No node in the path will have a label in the blacklist. |

|

whitelist filter - All nodes in the path must have a label in the whitelist (exempting termination and end nodes, if using those filters). If no whitelist operator is present, all labels are considered whitelisted. |

|

termination filter - Only return paths up to a node of the given labels, and stop further expansion beyond it. Termination nodes do not have to respect the whitelist. Termination filtering takes precedence over end node filtering. |

|

end node filter - Only return paths up to a node of the given labels, but continue expansion to match on end nodes beyond it. End nodes do not have to respect the whitelist to be returned, but expansion beyond them is only allowed if the node has a label in the whitelist. |

As of APOC 3.1.3.x multiple label filter operations are allowed.

In prior versions, only one type of operation is allowed in the label filter (+ or - or / or >, never more than one).

With APOC 3.2.x.x, label filters will no longer apply to starting nodes of the expansion by default, but this can be toggled with the filterStartNode config parameter.

With the APOC releases in January 2018, some behavior has changed in the label filters:

| filter | changed behavior |

|---|---|

|

Now indicates the label is whitelisted, same as if it were prefixed with |

|

The label is additionally whitelisted, so expansion will always continue beyond an end node (unless prevented by the blacklist).

Previously, expansion would only continue if allowed by the whitelist and not disallowed by the blacklist.

This also applies at a depth below |

|

When at depth below |

|

|

call apoc.path.expand(1,"ACTED_IN>|PRODUCED<|FOLLOWS<","+Movie|Person",0,3)

call apoc.path.expand(1,"ACTED_IN>|PRODUCED<|FOLLOWS<","-BigBrother",0,3)

call apoc.path.expand(1,"ACTED_IN>|PRODUCED<|FOLLOWS<","",0,3)

// combined with cypher:

match (tom:Person {name :"Tom Hanks"})

call apoc.path.expand(tom,"ACTED_IN>|PRODUCED<|FOLLOWS<","+Movie|Person",0,3) yield path as pp

return pp;

// or

match (p:Person) with p limit 3

call apoc.path.expand(p,"ACTED_IN>|PRODUCED<|FOLLOWS<","+Movie|Person",1,2) yield path as pp

return p, ppWe will first set a :Western label on some nodes.

match (p:Person)

where p.name in ['Clint Eastwood', 'Gene Hackman']

set p:WesternNow expand from 'Keanu Reeves' to all :Western nodes with a termination filter:

match (k:Person {name:'Keanu Reeves'})

call apoc.path.expandConfig(k, {relationshipFilter:'ACTED_IN|PRODUCED|DIRECTED', labelFilter:'/Western', uniqueness: 'NODE_GLOBAL'}) yield path

return pathThe one returned path only matches up to 'Gene Hackman'. While there is a path from 'Keanu Reeves' to 'Clint Eastwood' through 'Gene Hackman', no further expansion is permitted through a node in the termination filter.

If you didn’t want to stop expansion on reaching 'Gene Hackman', and wanted 'Clint Eastwood' returned as well, use the end node filter instead (>).

As of APOC 3.1.3.x, multiple label filter operators are allowed at the same time.

When processing the labelFilter string, once a filter operator is introduced, it remains the active filter until another filter supplants it. (Not applicable after February 2018 release, as no filter will now mean the label is whitelisted).

In the following example, :Person and :Movie labels are whitelisted, :SciFi is blacklisted, with :Western acting as an end node label, and :Romance acting as a termination label.

… labelFilter:'+Person|Movie|-SciFi|>Western|/Romance' …

The precedence of operator evaluation isn’t dependent upon their location in the labelFilter but is fixed:

Blacklist filter -, termination filter /, end node filter >, whitelist filter +.

The consequences are as follows:

-

No blacklisted label

-will ever be present in the nodes of paths returned, no matter if the same label (or another label of a node with a blacklisted label) is included in another filter list. -

If the termination filter

/or end node filter>is used, then only paths up to nodes with those labels will be returned as results. These end nodes are exempt from the whitelist filter. -

If a node is a termination node

/, no further expansion beyond the node will occur. -

If a node is an end node

>, expansion beyond that node will only occur if the end node has a label in the whitelist. This is to prevent returning paths to nodes where a node on that path violates the whitelist. (this no longer applies in releases after February 2018) -

The whitelist only applies to nodes up to but not including end nodes from the termination or end node filters. If no end node or termination node operators are present, then the whitelist applies to all nodes of the path.

-

If no whitelist operators are present in the labelFilter, this is treated as if all labels are whitelisted.

-

If

filterStartNodeis false (which will be default in APOC 3.2.x.x), then the start node is exempt from the label filter.

Sequences

Introduced in the February 2018 APOC releases, path expander procedures can expand on repeating sequences of labels, relationship types, or both.

If only using label sequences, just use the labelFilter, but use commas to separate the filtering for each step in the repeating sequence.

If only using relationship sequences, just use the relationshipFilter, but use commas to separate the filtering for each step of the repeating sequence.

If using sequences of both relationships and labels, use the sequence parameter.

| Usage | config param | description | syntax | explanation |

|---|---|---|---|---|

label sequences only |

|

Same syntax and filters, but uses commas ( |

|

Start node must be a :Post node that isn’t :Blocked, next node must be a :Reply, and the next must be an :Admin, then repeat if able. Only paths ending with the |

relationship sequences only |

|

Same syntax, but uses commas ( |

|

Expansion will first expand |

sequences of both labels and relationships |

|

A string of comma-separated alternating label and relationship filters, for each step in a repeating sequence. The sequence should begin with a label filter, and end with a relationship filter. If present, |

|

Combines the behaviors above. |

Starting the sequence at one-off from the start node

There are some uses cases where the sequence does not begin at the start node, but at one node distant.

A new config parameter, beginSequenceAtStart, can toggle this behavior.

Default value is true.

If set to false, this changes the expected values for labelFilter, relationshipFilter, and sequence as noted below:

| sequence | altered behavior | example | explanation |

|---|---|---|---|

|

The start node is not considered part of the sequence. The sequence begins one node off from the start node. |

|

The next node(s) out from the start node begins the sequence (and must be a :Post node that isn’t :Blocked), and only paths ending with |

|

The first relationship filter in the sequence string will not be considered part of the repeating sequence, and will only be used for the first relationship from the start node to the node that will be the actual start of the sequence. |

|

|

|

Combines the above two behaviors. |

|

Combines the behaviors above. |

Label filtering in sequences work together with the endNodes+terminatorNodes, though inclusion of a node must be unanimous.

Remember that filterStartNode defaults to false for APOC 3.2.x.x and newer. If you want the start node filtered according to the first step in the sequence, you may need to set this explicitly to true.

If you need to limit the number of times a sequence repeats, this can be done with the maxLevel config param (multiply the number of iterations with the size of the nodes in the sequence).

As paths are important when expanding sequences, we recommend avoiding apoc.path.subgraphNodes(), apoc.path.subgraphAll(), and apoc.path.spanningTree() when using sequences,

as the configurations that make these efficient at matching to distinct nodes may interfere with sequence pathfinding.

Expand with Config

apoc.path.expandConfig(startNode <id>Node/list, {config}) yield path expands from start nodes using the given configuration and yields the resulting paths

Takes an additional map parameter, config, to provide configuration options:

{minLevel: -1|number,

maxLevel: -1|number,

relationshipFilter: '[<]RELATIONSHIP_TYPE1[>]|[<]RELATIONSHIP_TYPE2[>], [<]RELATIONSHIP_TYPE3[>]|[<]RELATIONSHIP_TYPE4[>]',

labelFilter: '[+-/>]LABEL1|LABEL2|*,[+-/>]LABEL1|LABEL2|*,...',

uniqueness: RELATIONSHIP_PATH|NONE|NODE_GLOBAL|NODE_LEVEL|NODE_PATH|NODE_RECENT|

RELATIONSHIP_GLOBAL|RELATIONSHIP_LEVEL|RELATIONSHIP_RECENT,

bfs: true|false,

filterStartNode: true|false,

limit: -1|number,

optional: true|false,

endNodes: [nodes],

terminatorNodes: [nodes],

beginSequenceAtStart: true|false}

The config parameter filterStartNode defines whether or not the labelFilter (and sequence) applies to the start node of the expansion.

Use filterStartNode: false when you want your label filter to only apply to all other nodes in the path, ignoring the start node.

filterStartNode defaults for all path expander procedures:

| version | default |

|---|---|

>= APOC 3.2.x.x |

filterStartNode = false |

< APOC 3.2.x.x |

filterStartNode = true |

You can use the limit config parameter to limit the number of paths returned.

When using bfs:true (which is the default for all expand procedures), this has the effect of returning paths to the n nearest nodes with labels in the termination or end node filter, where n is the limit given.

The default limit value, -1, means no limit.

If you want to make sure multiple paths should never match to the same node, use expandConfig() with 'NODE_GLOBAL' uniqueness, or any expand procedure which already uses this uniqueness

(subgraphNodes(), subgraphAll(), and spanningTree()).

When optional is set to true, the path expansion is optional, much like an OPTIONAL MATCH, so a null value is yielded whenever the expansion would normally eliminate rows due to no results.

By default optional is false for all expansion procedures taking a config parameter.

Uniqueness of nodes and relationships guides the expansion and the results returned.

Uniqueness is only configurable using expandConfig().

subgraphNodes(), subgraphAll(), and spanningTree() all use 'NODE_GLOBAL' uniqueness.

| value | description |

|---|---|

|

For each returned node there’s a (relationship wise) unique path from the start node to it. This is Cypher’s default expansion mode. |

|

A node cannot be traversed more than once. This is what the legacy traversal framework does. |

|

Entities on the same level are guaranteed to be unique. |

|

For each returned node there’s a unique path from the start node to it. |

|

This is like NODE_GLOBAL, but only guarantees uniqueness among the most recent visited nodes, with a configurable count. Traversing a huge graph is quite memory intensive in that it keeps track of all the nodes it has visited. For huge graphs a traverser can hog all the memory in the JVM, causing OutOfMemoryError. Together with this Uniqueness you can supply a count, which is the number of most recent visited nodes. This can cause a node to be visited more than once, but scales infinitely. |

|

A relationship cannot be traversed more than once, whereas nodes can. |

|

Entities on the same level are guaranteed to be unique. |

|

Same as for NODE_RECENT, but for relationships. |

|

No restriction (the user will have to manage it) |

While label filters use labels to allow whitelisting, blacklisting, and restrictions on which kind of nodes can end or terminate expansion, you can also filter based upon actual nodes.

Each of these config parameter accepts a list of nodes, or a list of node ids.

| config parameter | description | added in |

|---|---|---|

|

Only these nodes can end returned paths, and expansion will continue past these nodes, if possible. |

Winter 2018 APOC releases. |

|

Only these nodes can end returned paths, and expansion won’t continue past these nodes. |

Winter 2018 APOC releases. |

|

Only these nodes are allowed in the expansion (though endNodes and terminatorNodes will also be allowed, if present). |

Spring 2018 APOC releases. |

|

None of the paths returned will include these nodes. |

Spring 2018 APOC releases. |

You can turn this cypher query:

MATCH (user:User) WHERE user.id = 460

MATCH (user)-[:RATED]->(movie)<-[:RATED]-(collab)-[:RATED]->(reco)

RETURN count(*);into this procedure call, with changed semantics for uniqueness and bfs (which is Cypher’s expand mode)

MATCH (user:User) WHERE user.id = 460

CALL apoc.path.expandConfig(user,{relationshipFilter:"RATED",minLevel:3,maxLevel:3,bfs:false,uniqueness:"NONE"}) YIELD path

RETURN count(*);Expand to nodes in a subgraph

apoc.path.subgraphNodes(startNode <id>Node/list, {maxLevel, relationshipFilter, labelFilter, bfs:true, filterStartNode:true, limit:-1, optional:false}) yield node

Expand to subgraph nodes reachable from the start node following relationships to max-level adhering to the label filters.

Accepts the same config values as in expandConfig(), though uniqueness and minLevel are not configurable.

Expand to all nodes of a connected subgraph:

MATCH (user:User) WHERE user.id = 460

CALL apoc.path.subgraphNodes(user, {}) YIELD node

RETURN node;Expand to all nodes reachable by :FRIEND relationships:

MATCH (user:User) WHERE user.id = 460

CALL apoc.path.subgraphNodes(user, {relationshipFilter:'FRIEND'}) YIELD node

RETURN node;Expand to a subgraph and return all nodes and relationships within the subgraph

apoc.path.subgraphAll(startNode <id>Node/list, {maxLevel, relationshipFilter, labelFilter, bfs:true, filterStartNode:true, limit:-1}) yield nodes, relationships

Expand to subgraph nodes reachable from the start node following relationships to max-level adhering to the label filters. Returns the collection of nodes in the subgraph, and the collection of relationships between all subgraph nodes.

Accepts the same config values as in expandConfig(), though uniqueness and minLevel are not configurable.

The optional config value isn’t needed, as empty lists are yielded if there are no results, so rows are never eliminated.

Expand to local subgraph (and all its relationships) within 4 traversals:

MATCH (user:User) WHERE user.id = 460

CALL apoc.path.subgraphAll(user, {maxLevel:4}) YIELD nodes, relationships

RETURN nodes, relationships;Expand a spanning tree

apoc.path.spanningTree(startNode <id>Node/list, {maxLevel, relationshipFilter, labelFilter, bfs:true, filterStartNode:true, limit:-1, optional:false}) yield path

Expand a spanning tree reachable from start node following relationships to max-level adhering to the label filters. The paths returned collectively form a spanning tree.

Accepts the same config values as in expandConfig(), though uniqueness and minLevel are not configurable.

Expand a spanning tree of all contiguous :User nodes:

MATCH (user:User) WHERE user.id = 460

CALL apoc.path.spanningTree(user, {labelFilter:'+User'}) YIELD path

RETURN path;Centrality Algorithms

Setup

Let’s create some test data to run the Centrality algorithms on.

// create 100 nodes

FOREACH (id IN range(0,1000) | CREATE (:Node {id:id}))

// over the cross product (1M) create 100.000 relationships

MATCH (n1:Node),(n2:Node) WITH n1,n2 LIMIT 1000000 WHERE rand() < 0.1

CREATE (n1)-[:TYPE]->(n2)Closeness Centrality Procedure

Centrality is an indicator of a node’s influence in a graph. In graphs there is a natural distance metric between pairs of nodes, defined by the length of their shortest paths. For both algorithms below we can measure based upon the direction of the relationship, whereby the 3rd argument represents the direction and can be of value BOTH, INCOMING, OUTGOING.

Closeness Centrality defines the farness of a node as the sum of its distances from all other nodes, and its closeness as the reciprocal of farness.

The more central a node is the lower its total distance from all other nodes.

Complexity: This procedure uses a BFS shortest path algorithm. With BFS the complexes becomes O(n * m)

Caution: Due to the complexity of this algorithm it is recommended to run it on only the nodes you are interested in.

MATCH (node:Node)

WHERE node.id %2 = 0

WITH collect(node) AS nodes

CALL apoc.algo.closeness(['TYPE'],nodes,'INCOMING') YIELD node, score

RETURN node, score

ORDER BY score DESCBetweenness Centrality Procedure

The procedure will compute betweenness centrality as defined by Linton C. Freeman (1977) using the algorithm by Ulrik Brandes (2001). Centrality is an indicator of a node’s influence in a graph.

Betweenness Centrality is equal to the number of shortest paths from all nodes to all others that pass through that node.

High centrality suggests a large influence on the transfer of items through the graph.

Centrality is applicable to numerous domains, including: social networks, biology, transport and scientific cooperation.

Complexity: This procedure uses a BFS shortest path algorithm. With BFS the complexes becomes O(n * m) Caution: Due to the complexity of this algorithm it is recommended to run it on only the nodes you are interested in.

MATCH (node:Node)

WHERE node.id %2 = 0

WITH collect(node) AS nodes

CALL apoc.algo.betweenness(['TYPE'],nodes,'BOTH') YIELD node, score

RETURN node, score

ORDER BY score DESCPageRank Algorithm

Setup

Let’s create some test data to run the PageRank algorithm on.

// create 100 nodes

FOREACH (id IN range(0,1000) | CREATE (:Node {id:id}))

// over the cross product (1M) create 100.000 relationships

MATCH (n1:Node),(n2:Node) WITH n1,n2 LIMIT 1000000 WHERE rand() < 0.1

CREATE (n1)-[:TYPE_1]->(n2)PageRank Procedure

PageRank is an algorithm used by Google Search to rank websites in their search engine results.

It is a way of measuring the importance of nodes in a graph.

PageRank counts the number and quality of relationships to a node to approximate the importance of that node.

PageRank assumes that more important nodes likely have more relationships.

Caution: nodes specifies the nodes for which a PageRank score will be projected, but the procedure will always compute the PageRank algorithm on the entire graph. At present, there is no way to filter/reduce the number of elements that PageRank computes over.

A future version of this procedure will provide the option of computing PageRank on a subset of the graph.

MATCH (node:Node)

WHERE node.id %2 = 0

WITH collect(node) AS nodes

// compute over relationships of all types

CALL apoc.algo.pageRank(nodes) YIELD node, score

RETURN node, score

ORDER BY score DESCMATCH (node:Node)

WHERE node.id %2 = 0

WITH collect(node) AS nodes

// only compute over relationships of types TYPE_1 or TYPE_2

CALL apoc.algo.pageRankWithConfig(nodes,{types:'TYPE_1|TYPE_2'}) YIELD node, score

RETURN node, score

ORDER BY score DESCMATCH (node:Node)

WHERE node.id %2 = 0

WITH collect(node) AS nodes

// peroform 10 page rank iterations, computing only over relationships of type TYPE_1

CALL apoc.algo.pageRankWithConfig(nodes,{iterations:10,types:'TYPE_1'}) YIELD node, score

RETURN node, score

ORDER BY score DESCSpatial

Spatial Functions

The spatial procedures are intended to enable geographic capabilities on your data.

Geocode

The first procedure geocode which will convert a textual address into a location containing latitude, longitude and description. Despite being only a single function, together with the built-in functions point and distance we can achieve quite powerful results.

First, how can we use the procedure:

CALL apoc.spatial.geocodeOnce('21 rue Paul Bellamy 44000 NANTES FRANCE') YIELD location

RETURN location.latitude, location.longitude // will return 47.2221667, -1.5566624There are three forms of the procedure:

-

geocodeOnce(address) returns zero or one result.

-

geocode(address,maxResults) returns zero, one or more up to maxResults.

-

reverseGeocode(latitude,longitude) returns zero or one result.

This is because the backing geocoding service (OSM, Google, OpenCage or other) might return multiple results for the same query. GeocodeOnce() is designed to return the first, or highest ranking result.

The third procedure reverseGeocode will convert a location containing latitude and longitude into a textual address.

CALL apoc.spatial.reverseGeocode(47.2221667,-1.5566625) YIELD location RETURN location.description; // will return 21, Rue Paul Bellamy, Talensac - Pont Morand, //Hauts-Pavés - Saint-Félix, Nantes, Loire-Atlantique, Pays de la Loire, //France métropolitaine, 44000, France

Configuring Geocode

There are a few options that can be set in the neo4j.conf file to control the service:

-

apoc.spatial.geocode.provider=osm (osm, google, opencage, etc.)

-

apoc.spatial.geocode.osm.throttle=5000 (ms to delay between queries to not overload OSM servers)

-

apoc.spatial.geocode.google.throttle=1 (ms to delay between queries to not overload Google servers)

-

apoc.spatial.geocode.google.key=xxxx (API key for google geocode access)

-

apoc.spatial.geocode.google.client=xxxx (client code for google geocode access)

-

apoc.spatial.geocode.google.signature=xxxx (client signature for google geocode access)

For google, you should use either a key or a combination of client and signature. Read more about this on the google page for geocode access at https://developers.google.com/maps/documentation/geocoding/get-api-key#key

Configuring Custom Geocode Provider

Geocode

For any provider that is not 'osm' or 'google' you get a configurable supplier that requires two additional settings, 'url' and 'key'. The 'url' must contain the two words 'PLACE' and 'KEY'. The 'KEY' will be replaced with the key you get from the provider when you register for the service. The 'PLACE' will be replaced with the address to geocode when the procedure is called.

Reverse Geocode

The 'url' must contain the three words 'LAT', 'LNG' and 'KEY'. The 'LAT' will be replaced with the latitude and 'LNG' will be replaced with the the longitude to reverse geocode when the procedure is called.

For example, to get the service working with OpenCage, perform the following steps:

-

Register your own application key at https://geocoder.opencagedata.com/

-

Once you have a key, add the following three lines to neo4j.conf

apoc.spatial.geocode.provider=opencage apoc.spatial.geocode.opencage.key=XXXXXXXXXXXXXXXXXXXXXXXXXXXXXX apoc.spatial.geocode.opencage.url=http://api.opencagedata.com/geocode/v1/json?q=PLACE&key=KEY apoc.spatial.geocode.opencage.reverse.url=http://api.opencagedata.com/geocode/v1/json?q=LAT+LNG&key=KEY

-

make sure that the 'XXXXXXX' part above is replaced with your actual key

-

Restart the Neo4j server and then test the geocode procedures to see that they work

-

If you are unsure if the provider is correctly configured try verify with:

CALL apoc.spatial.showConfig()Using Geocode within a bigger Cypher query

A more complex, or useful, example which geocodes addresses found in properties of nodes:

MATCH (a:Place)

WHERE exists(a.address)

CALL apoc.spatial.geocodeOnce(a.address) YIELD location

RETURN location.latitude AS latitude, location.longitude AS longitude, location.description AS descriptionCalculating distance between locations

If we wish to calculate the distance between addresses, we need to use the point() function to convert latitude and longitude to Cyper Point types, and then use the distance() function to calculate the distance:

WITH point({latitude: 48.8582532, longitude: 2.294287}) AS eiffel

MATCH (a:Place)

WHERE exists(a.address)

CALL apoc.spatial.geocodeOnce(a.address) YIELD location

WITH location, distance(point(location), eiffel) AS distance

WHERE distance < 5000

RETURN location.description AS description, distance

ORDER BY distance

LIMIT 100sortPathsByDistance

The second procedure enables you to sort a given collection of paths by the sum of their distance based on lat/long properties on the nodes.

Sample data :

CREATE (bruges:City {name:"bruges", latitude: 51.2605829, longitude: 3.0817189})

CREATE (brussels:City {name:"brussels", latitude: 50.854954, longitude: 4.3051786})

CREATE (paris:City {name:"paris", latitude: 48.8588376, longitude: 2.2773455})

CREATE (dresden:City {name:"dresden", latitude: 51.0767496, longitude: 13.6321595})

MERGE (bruges)-[:NEXT]->(brussels)

MERGE (brussels)-[:NEXT]->(dresden)

MERGE (brussels)-[:NEXT]->(paris)

MERGE (bruges)-[:NEXT]->(paris)

MERGE (paris)-[:NEXT]->(dresden)Finding paths and sort them by distance

MATCH (a:City {name:'bruges'}), (b:City {name:'dresden'})

MATCH p=(a)-[*]->(b)

WITH collect(p) as paths

CALL apoc.spatial.sortPathsByDistance(paths) YIELD path, distance

RETURN path, distanceGraph Refactoring

In order not to have to repeatedly geocode the same thing in multiple queries, especially if the database will be used by many people, it might be a good idea to persist the results in the database so that subsequent calls can use the saved results.

Geocode and persist the result

MATCH (a:Place)

WHERE exists(a.address) AND NOT exists(a.latitude)

WITH a LIMIT 1000

CALL apoc.spatial.geocodeOnce(a.address) YIELD location

SET a.latitude = location.latitude

SET a.longitude = location.longitudeNote that the above command only geocodes the first 1000 ‘Place’ nodes that have not already been geocoded. This query can be run multiple times until all places are geocoded. Why would we want to do this? Two good reasons:

-

The geocoding service is a public service that can throttle or blacklist sites that hit the service too heavily, so controlling how much we do is useful.

-

The transaction is updating the database, and it is wise not to update the database with too many things in the same transaction, to avoid using up too much memory. This trick will keep the memory usage very low.

Now make use of the results in distance queries

WITH point({latitude: 48.8582532, longitude: 2.294287}) AS eiffel

MATCH (a:Place)

WHERE exists(a.latitude) AND exists(a.longitude)

WITH a, distance(point(a), eiffel) AS distance

WHERE distance < 5000

RETURN a.name, distance

ORDER BY distance

LIMIT 100Combined Space and Time search

Combining spatial and date-time functions can allow for more complex queries:

WITH point({latitude: 48.8582532, longitude: 2.294287}) AS eiffel

MATCH (e:Event)

WHERE exists(e.address) AND exists(e.datetime)

CALL apoc.spatial.geocodeOnce(e.address) YIELD location

WITH e, location,

distance(point(location), eiffel) AS distance,

(apoc.date.parse('2016-06-01 00:00:00','h') - apoc.date.parse(e.datetime,'h'))/24.0 AS days_before_due

WHERE distance < 5000 AND days_before_due < 14 AND apoc.date.parse(e.datetime,'h') < apoc.date.parse('2016-06-01 00:00:00','h')

RETURN e.name AS event, e.datetime AS date,

location.description AS description, distance

ORDER BY distanceData Integration

Load JSON

Load JSON

Web APIs are a huge opportunity to access and integrate data from any sources with your graph. Most of them provide the data as JSON.

With apoc.load.json you can retrieve data from URLs and turn it into map value(s) for Cypher to consume.

Cypher is pretty good at deconstructing nested documents with dot syntax, slices, UNWIND etc. so it is easy to turn nested data into graphs.

Sources with multiple JSON objects in a stream are also supported, like the streaming Twitter format or the Yelp Kaggle dataset.

Json-Path

Most of the apoc.load.json and apoc.convert.*Json procedures and functions now accept a json-path as last argument.

The json-path uses the Java implementation by Jayway of Stefan Gössners JSON-Path

Here is some syntax, there are more examples at the links above.

$.store.book[0].title

| Operator | Description |

|---|---|

|

The root element to query. This starts all path expressions. |

|

The current node being processed by a filter predicate. |

|

Wildcard. Available anywhere a name or numeric are required. |

|

Deep scan. Available anywhere a name is required. |

|

Dot-notated child |

|

Bracket-notated child or children |

|

Array index or indexes |

|

Array slice operator |

|

Filter expression. Expression must evaluate to a boolean value. |

If used, this path is applied to the json and can be used to extract sub-documents and -values before handing the result to Cypher, resulting in shorter statements with complex nested JSON.

There is also a direct apoc.json.path(json,path) function.

Load JSON StackOverflow Example

There have been articles before about loading JSON from Web-APIs like StackOverflow.

With apoc.load.json it’s now very easy to load JSON data from any file or URL.

If the result is a JSON object is returned as a singular map. Otherwise if it was an array is turned into a stream of maps.

The URL for retrieving the last questions and answers of the neo4j tag is this:

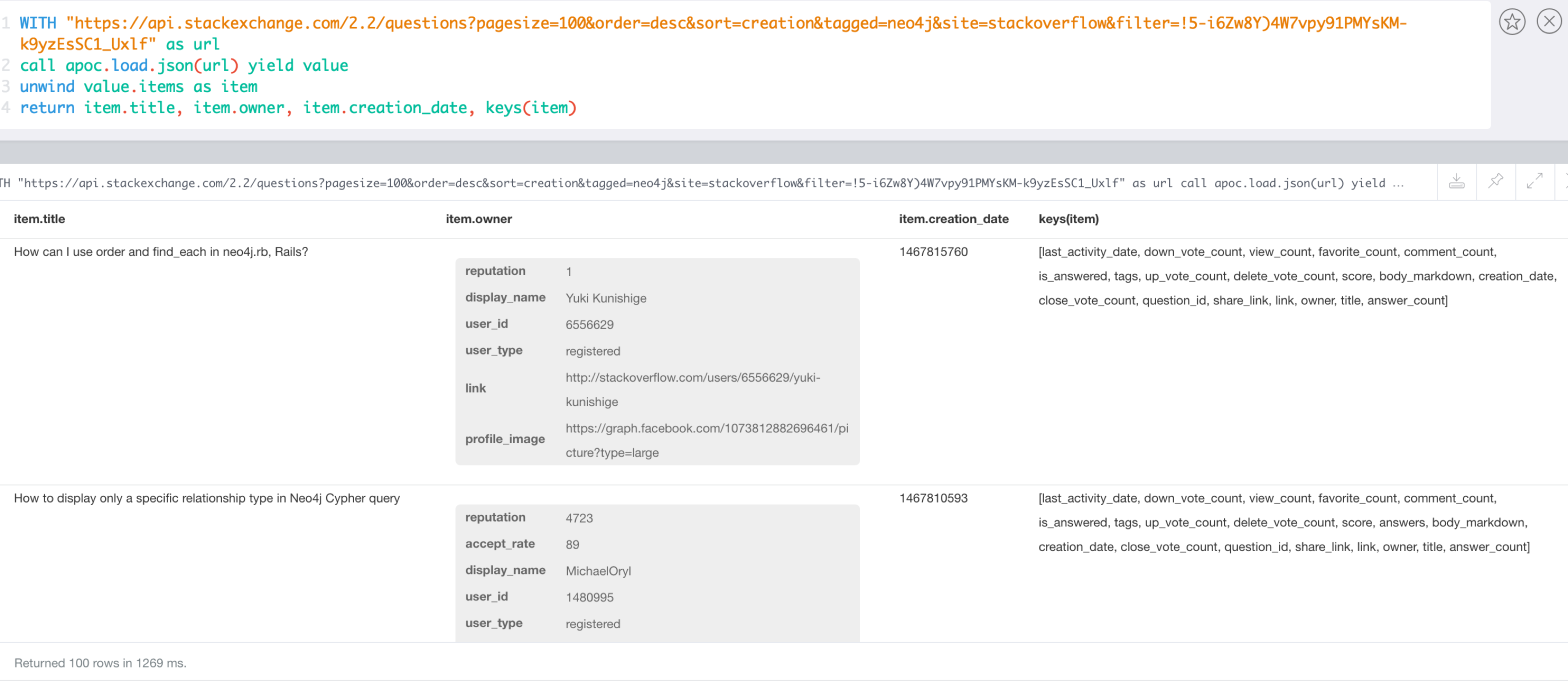

Now it can be used from within Cypher directly, let’s first introspect the data that is returned.

WITH "https://api.stackexchange.com/2.2/questions?pagesize=100&order=desc&sort=creation&tagged=neo4j&site=stackoverflow&filter=!5-i6Zw8Y)4W7vpy91PMYsKM-k9yzEsSC1_Uxlf" AS url

CALL apoc.load.json(url) YIELD value

UNWIND value.items AS item

RETURN item.title, item.owner, item.creation_date, keys(item)

WITH "https://api.stackexchange.com/2.2/questions?pagesize=100&order=desc&sort=creation&tagged=neo4j&site=stackoverflow&filter=!5-i6Zw8Y)4W7vpy91PMYsKM-k9yzEsSC1_Uxlf" AS url

CALL apoc.load.json(url,'$.items.owner.name') YIELD value

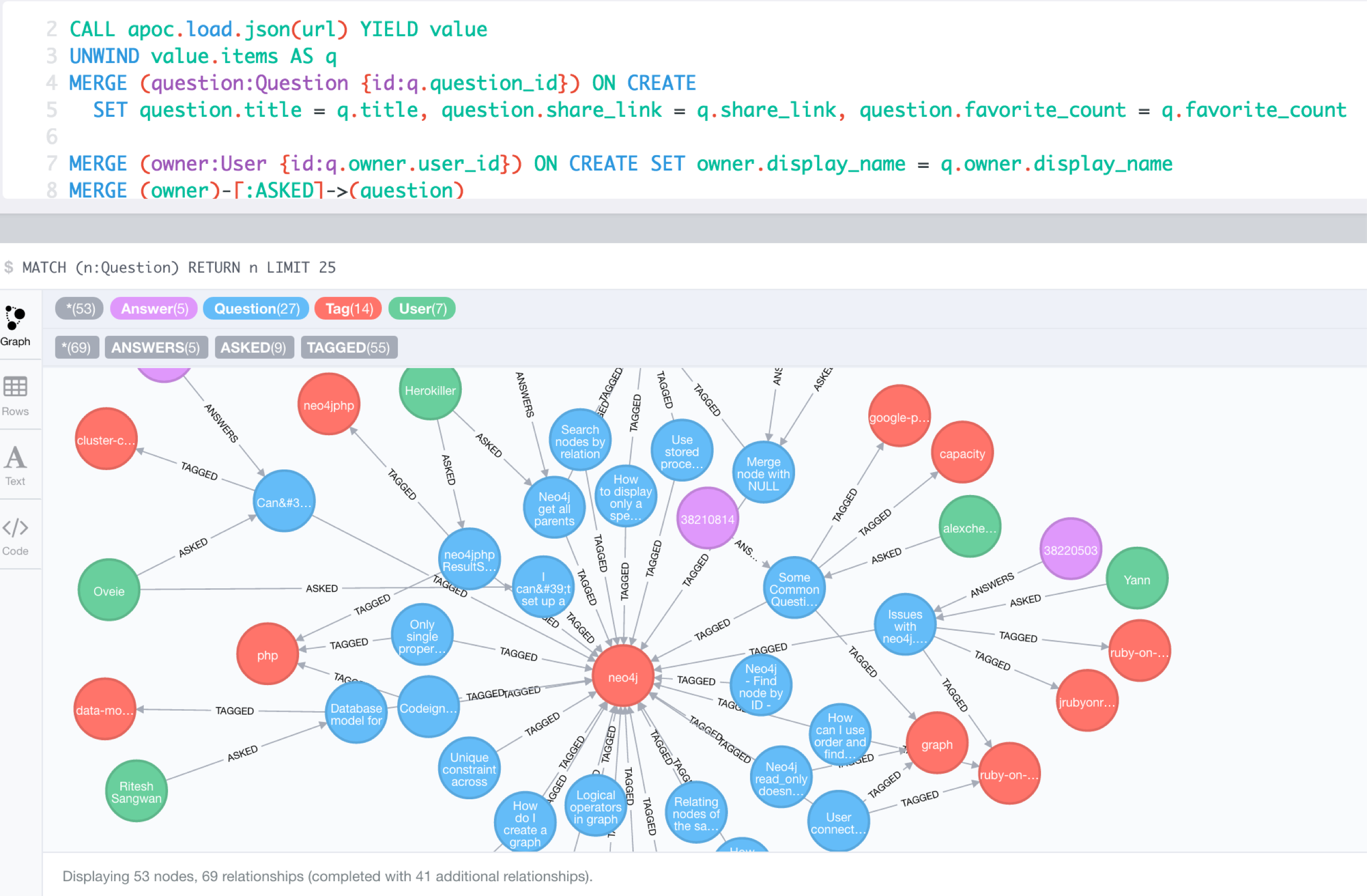

RETURN name, count(*);Combined with the cypher query from the original blog post it’s easy to create the full Neo4j graph of those entities.

We filter the original poster last, b/c deleted users have no user_id anymore.

WITH "https://api.stackexchange.com/2.2/questions?pagesize=100&order=desc&sort=creation&tagged=neo4j&site=stackoverflow&filter=!5-i6Zw8Y)4W7vpy91PMYsKM-k9yzEsSC1_Uxlf" AS url

CALL apoc.load.json(url) YIELD value

UNWIND value.items AS q

MERGE (question:Question {id:q.question_id}) ON CREATE

SET question.title = q.title, question.share_link = q.share_link, question.favorite_count = q.favorite_count

FOREACH (tagName IN q.tags | MERGE (tag:Tag {name:tagName}) MERGE (question)-[:TAGGED]->(tag))

FOREACH (a IN q.answers |

MERGE (question)<-[:ANSWERS]-(answer:Answer {id:a.answer_id})

MERGE (answerer:User {id:a.owner.user_id}) ON CREATE SET answerer.display_name = a.owner.display_name

MERGE (answer)<-[:PROVIDED]-(answerer)

)

WITH * WHERE NOT q.owner.user_id IS NULL

MERGE (owner:User {id:q.owner.user_id}) ON CREATE SET owner.display_name = q.owner.display_name

MERGE (owner)-[:ASKED]->(question)

Load JSON from Twitter (with additional parameters)

With apoc.load.jsonParams you can send additional headers or payload with your JSON GET request, e.g. for the Twitter API:

Configure Bearer and Twitter Search Url token in neo4j.conf

apoc.static.twitter.bearer=XXXX apoc.static.twitter.url=https://api.twitter.com/1.1/search/tweets.json?count=100&result_type=recent&lang=en&q=

CALL apoc.static.getAll("twitter") yield value AS twitter

CALL apoc.load.jsonParams(twitter.url + "oscon+OR+neo4j+OR+%23oscon+OR+%40neo4j",{Authorization:"Bearer "+twitter.bearer},null) yield value

UNWIND value.statuses as status

WITH status, status.user as u, status.entities as e

RETURN status.id, status.text, u.screen_name, [t IN e.hashtags | t.text] as tags, e.symbols, [m IN e.user_mentions | m.screen_name] as mentions, [u IN e.urls | u.expanded_url] as urlsGeoCoding Example

Example for reverse geocoding and determining the route from one to another location.

WITH

"21 rue Paul Bellamy 44000 NANTES FRANCE" AS fromAddr,

"125 rue du docteur guichard 49000 ANGERS FRANCE" AS toAddr

call apoc.load.json("http://www.yournavigation.org/transport.php?url=http://nominatim.openstreetmap.org/search&format=json&q=" + replace(fromAddr, ' ', '%20')) YIELD value AS from

WITH from, toAddr LIMIT 1

call apoc.load.json("http://www.yournavigation.org/transport.php?url=http://nominatim.openstreetmap.org/search&format=json&q=" + replace(toAddr, ' ', '%20')) YIELD value AS to

CALL apoc.load.json("https://router.project-osrm.org/viaroute?instructions=true&alt=true&z=17&loc=" + from.lat + "," + from.lon + "&loc=" + to.lat + "," + to.lon ) YIELD value AS doc

UNWIND doc.route_instructions as instruction

RETURN instructionLoad JDBC

Overview: Database Integration

Data Integration is an important topic. Reading data from relational databases to create and augment data models is a very helpful exercise.

With apoc.load.jdbc you can access any database that provides a JDBC driver, and execute queries whose results are turned into streams of rows.

Those rows can then be used to update or create graph structures.

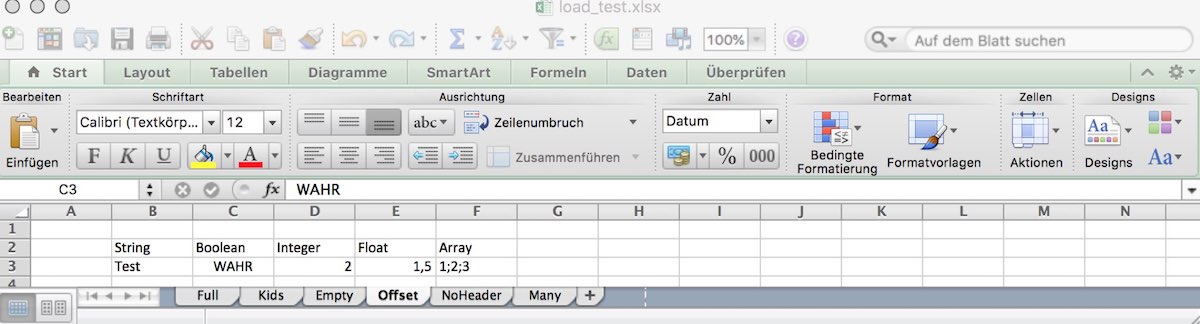

| type | qualified name | description |

|---|---|---|

procedure |

|

apoc.load.xls('url','selector',{config}) YIELD lineNo, list, map - load XLS fom URL as stream of row values, config contains any of: {skip:1,limit:5,header:false,ignore:['tmp'],arraySep:';',mapping:{years:{type:'int',arraySep:'-',array:false,name:'age',ignore:false}} |

procedure |

|

apoc.load.csv('url',{config}) YIELD lineNo, list, map - load CSV fom URL as stream of values, |

To simplify the JDBC URL syntax and protect credentials, you can configure aliases in conf/neo4j.conf:

apoc.jdbc.myDB.url=jdbc:derby:derbyDB

CALL apoc.load.jdbc('jdbc:derby:derbyDB','PERSON')

becomes

CALL apoc.load.jdbc('myDB','PERSON')

The 3rd value in the apoc.jdbc.<alias>.url= effectively defines an alias to be used in apoc.load.jdbc('<alias>',….

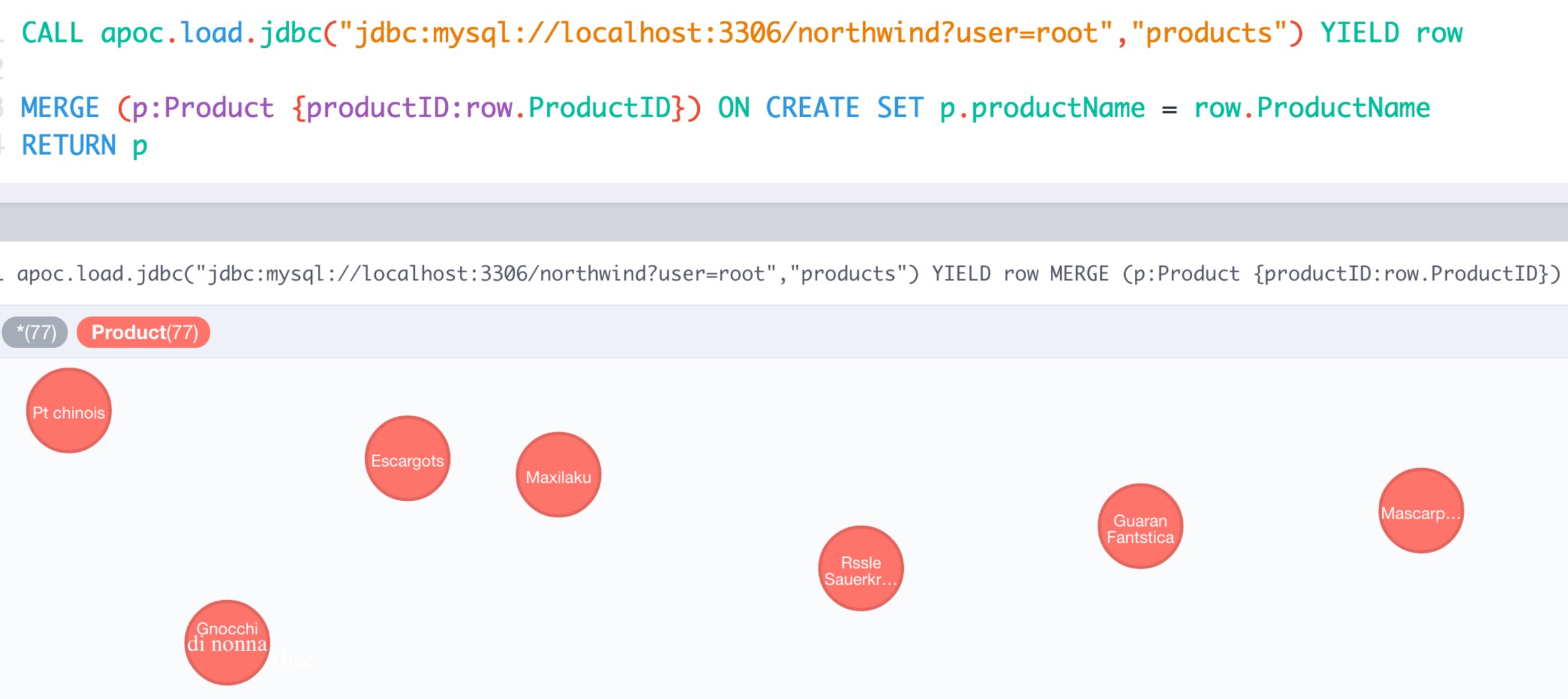

MySQL Example

Northwind is a common example set for relational databases, which is also covered in our import guides, e.g. :play northwind graph in the Neo4j browser.

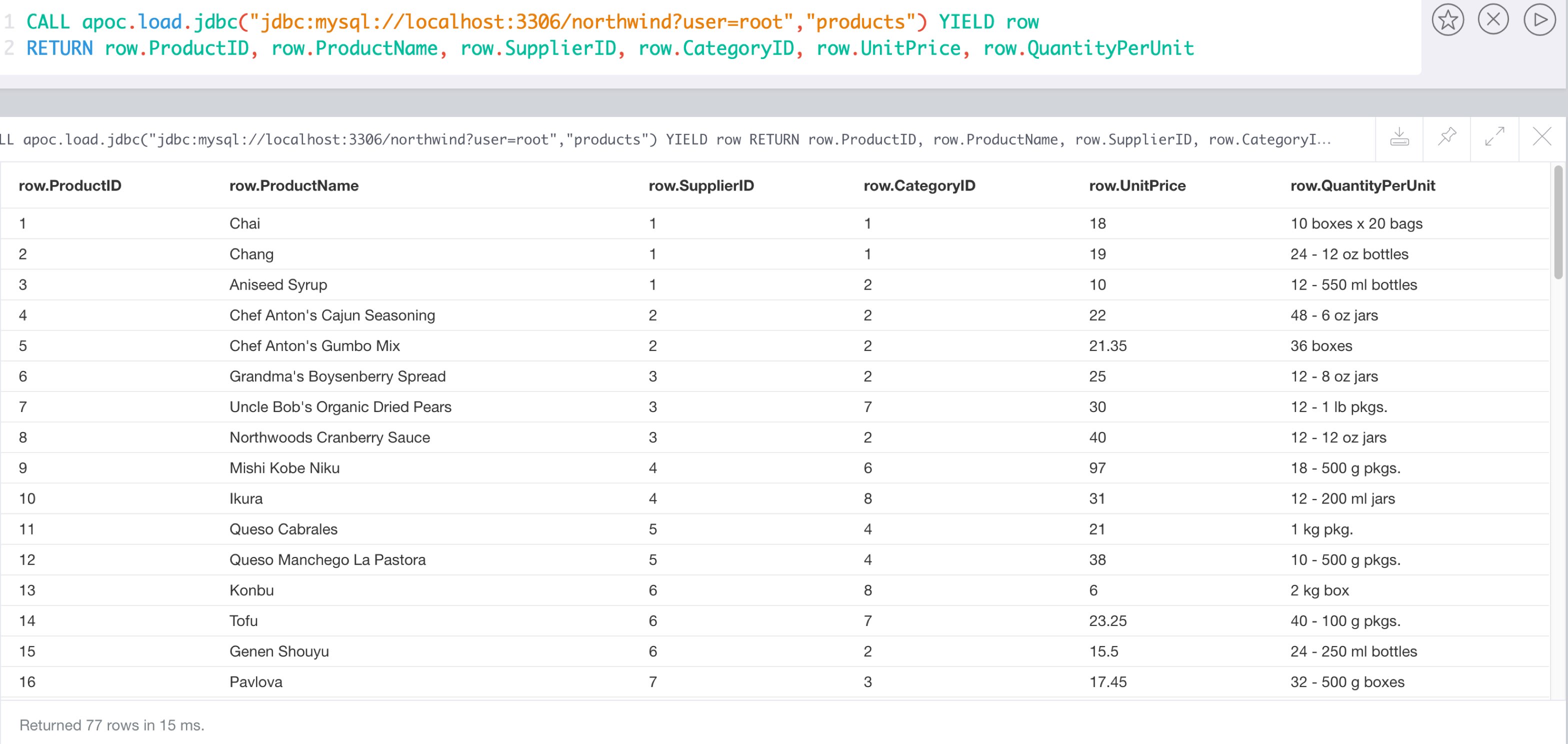

MySQL Northwind Data

select count(*) from products; +----------+ | count(*) | +----------+ | 77 | +----------+ 1 row in set (0,00 sec)

describe products; +-----------------+---------------+------+-----+---------+----------------+ | Field | Type | Null | Key | Default | Extra | +-----------------+---------------+------+-----+---------+----------------+ | ProductID | int(11) | NO | PRI | NULL | auto_increment | | ProductName | varchar(40) | NO | MUL | NULL | | | SupplierID | int(11) | YES | MUL | NULL | | | CategoryID | int(11) | YES | MUL | NULL | | | QuantityPerUnit | varchar(20) | YES | | NULL | | | UnitPrice | decimal(10,4) | YES | | 0.0000 | | | UnitsInStock | smallint(2) | YES | | 0 | | | UnitsOnOrder | smallint(2) | YES | | 0 | | | ReorderLevel | smallint(2) | YES | | 0 | | | Discontinued | bit(1) | NO | | b'0' | | +-----------------+---------------+------+-----+---------+----------------+ 10 rows in set (0,00 sec)

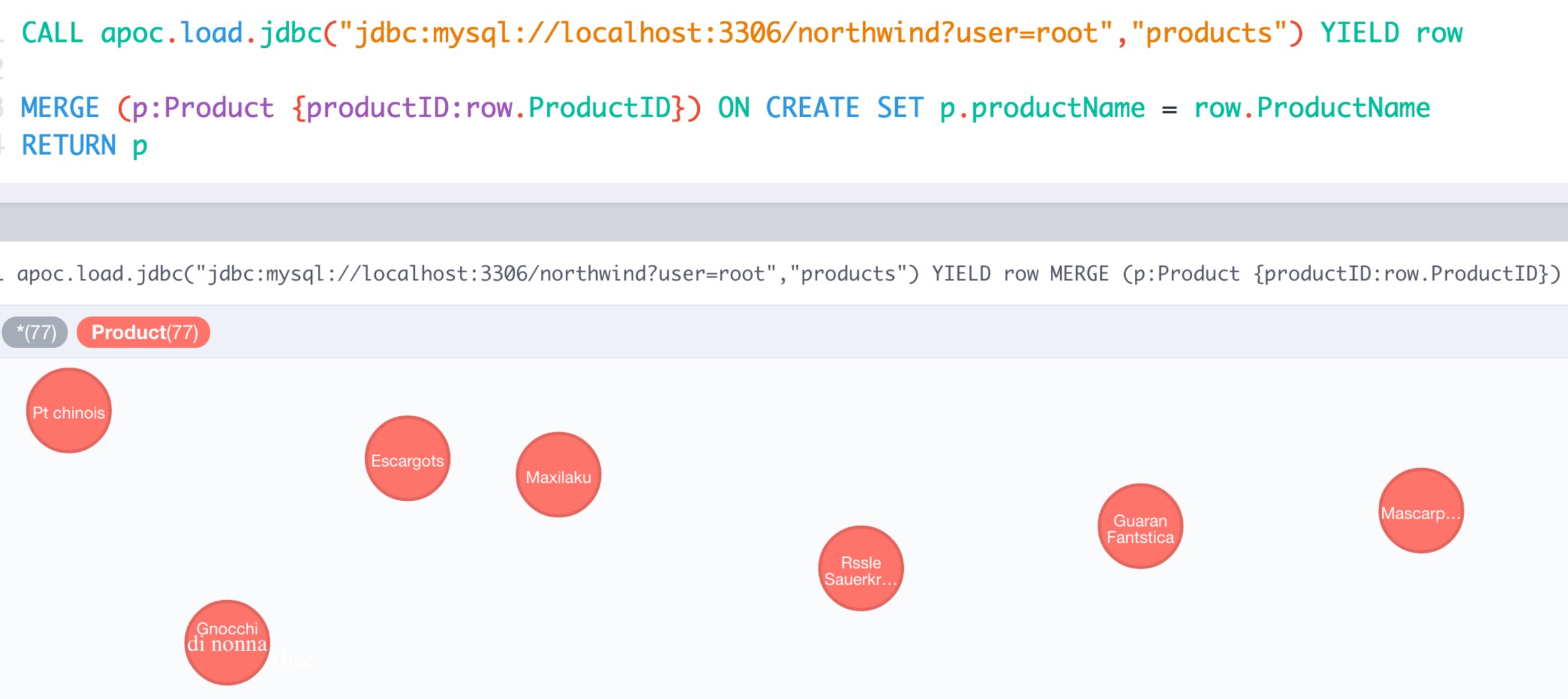

Load JDBC Examples

cypher CALL apoc.load.driver("com.mysql.jdbc.Driver");with "jdbc:mysql://localhost:3306/northwind?user=root" as url

cypher CALL apoc.load.jdbc(url,"products") YIELD row

RETURN count(*);+----------+ | count(*) | +----------+ | 77 | +----------+ 1 row 23 ms

with "jdbc:mysql://localhost:3306/northwind?user=root" as url

cypher CALL apoc.load.jdbc(url,"products") YIELD row

RETURN row limit 1;+--------------------------------------------------------------------------------+

| row |

+--------------------------------------------------------------------------------+

| {UnitPrice -> 18.0000, UnitsOnOrder -> 0, CategoryID -> 1, UnitsInStock -> 39} |

+--------------------------------------------------------------------------------+

1 row

10 ms

Load JDBC with params Examples

with "select firstname, lastname from employees where firstname like ? and lastname like ?" as sql

cypher call apoc.load.jdbcParams("northwind", sql, ['F%', '%w']) yield row

return row

JDBC pretends positional "?" for parameters, so the third apoc parameter has to be an array with values coherent with that positions. In case of 2 parameters, firstname and lastname ['firstname-position','lastname-position']

Load data in transactional batches

You can load data from jdbc and create/update the graph using the query results in batches (and in parallel).

CALL apoc.periodic.iterate('

call apoc.load.jdbc("jdbc:mysql://localhost:3306/northwind?user=root","company")',

'CREATE (p:Person) SET p += value', {batchSize:10000, parallel:true})

RETURN batches, totalCassandra Example

Setup Song database as initial dataset

curl -OL https://raw.githubusercontent.com/neo4j-contrib/neo4j-cassandra-connector/master/db_gen/playlist.cql curl -OL https://raw.githubusercontent.com/neo4j-contrib/neo4j-cassandra-connector/master/db_gen/artists.csv curl -OL https://raw.githubusercontent.com/neo4j-contrib/neo4j-cassandra-connector/master/db_gen/songs.csv $CASSANDRA_HOME/bin/cassandra $CASSANDRA_HOME/bin/cqlsh -f playlist.cql

Download the Cassandra JDBC Wrapper, and put it into your $NEO4J_HOME/plugins directory.

Add this config option to $NEO4J_HOME/conf/neo4j.conf to make it easier to interact with the cassandra instance.

apoc.jdbc.cassandra_songs.url=jdbc:cassandra://localhost:9042/playlist

Restart the server.

Now you can inspect the data in Cassandra with.

CALL apoc.load.jdbc('cassandra_songs','artists_by_first_letter') yield row

RETURN count(*);╒════════╕ │count(*)│ ╞════════╡ │3605 │ └────────┘

CALL apoc.load.jdbc('cassandra_songs','artists_by_first_letter') yield row

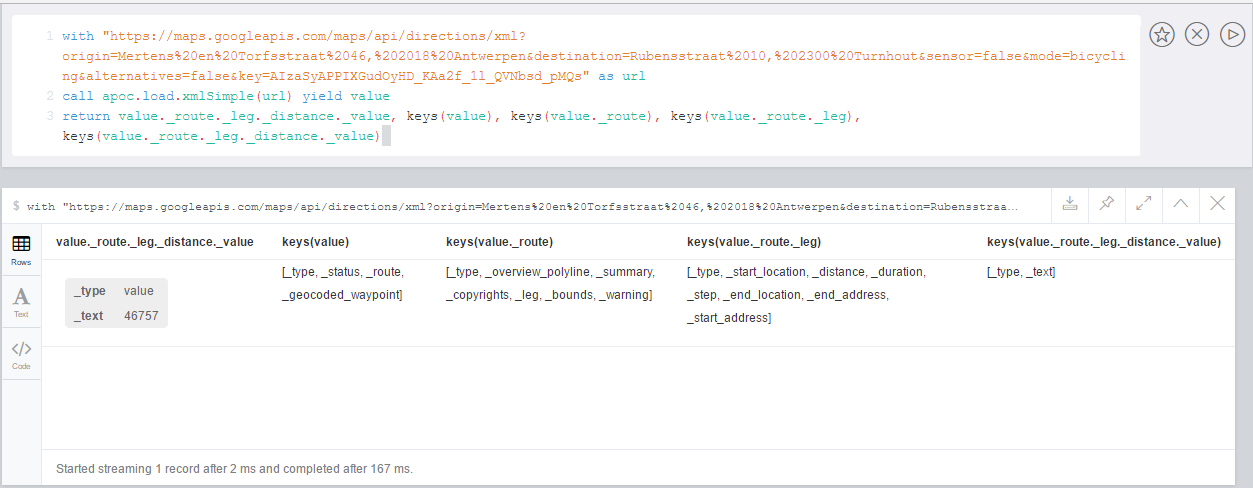

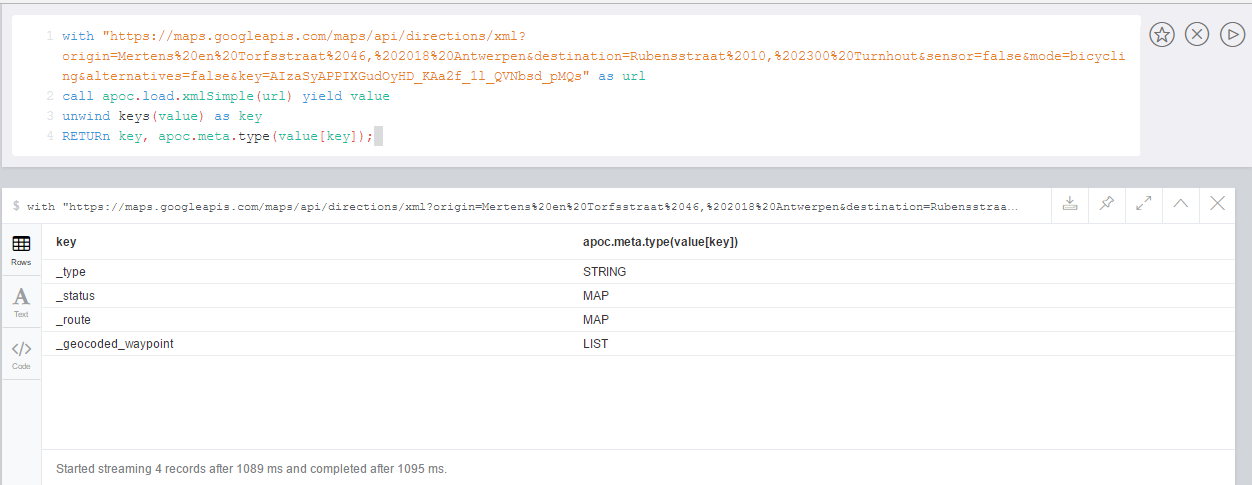

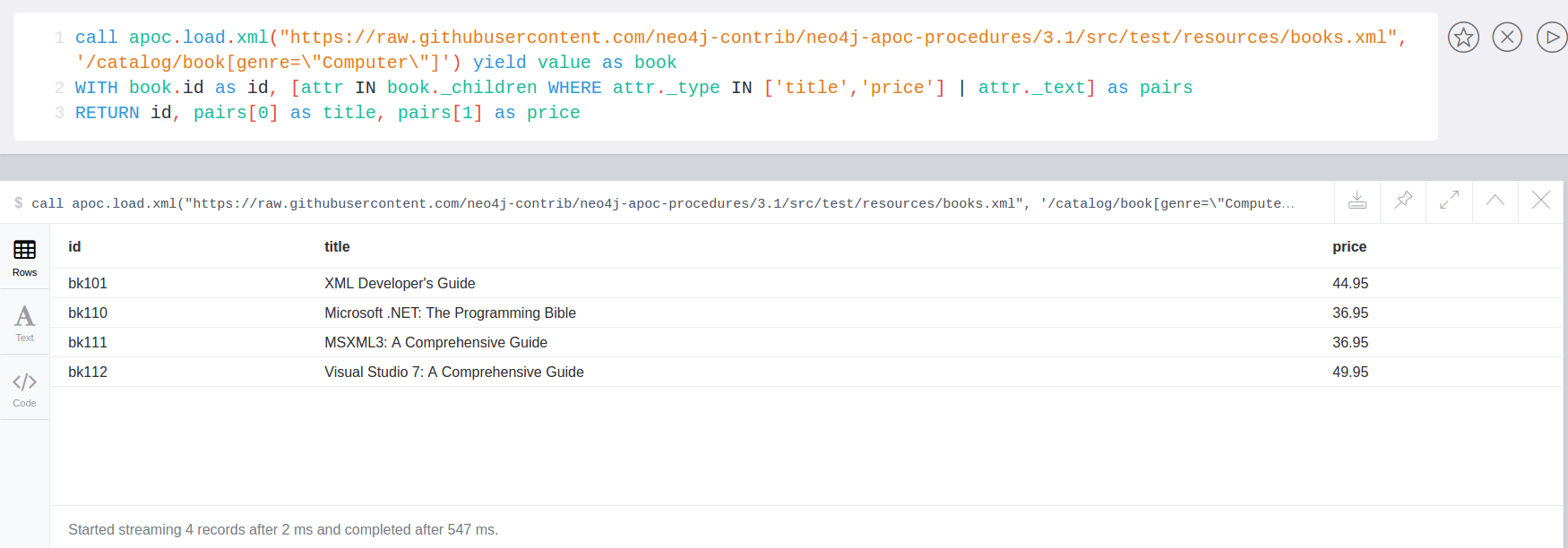

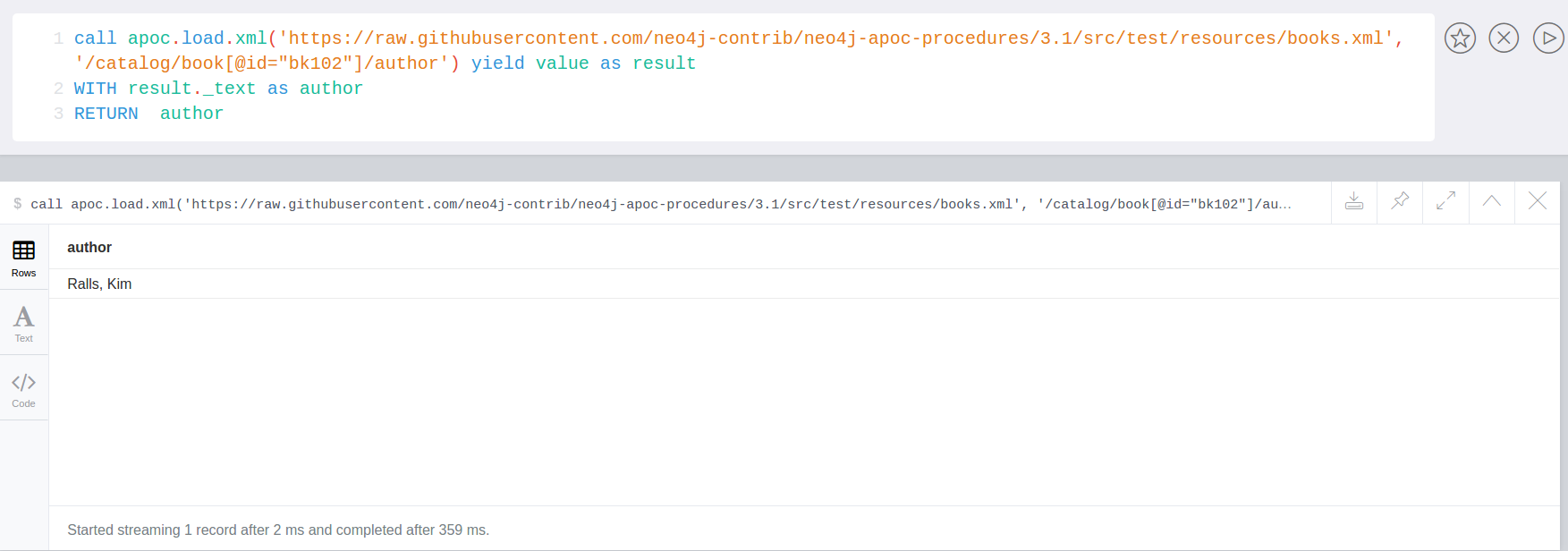

RETURN row LIMIT 5;CALL apoc.load.jdbc('cassandra_songs','artists_by_first_letter') yield row